Edge AI applications, whether in airports, cars, military operations, or hospitals, rely on high-powered sensor streaming applications that enable real-time processing and decision-making. With its latest v0.5 release, the NVIDIA Holoscan SDK is ushering in a new wave of sensor-processing capabilities for the next generation of AI applications at the edge.

This release also coincides with the launch of HoloHub, a GitHub repository where Holoscan developers can share reusable components and sample applications and collaborate on them. HoloHub now features a wide range of new reference applications and operators intended to facilitate the creation and modification of data processing pipelines.

With HoloHub, we intend to provide a range of operators and applications for developers at various stages of prototyping, designing, developing, and testing AI streaming algorithms, from I/O support to image segmentation and object detection. HoloHub is owned by NVIDIA and driven by an open community of developers sharing the building blocks that they’ve used to build industry-leading AI applications.

Highlights of the Holoscan v0.5 release:

- New operators and reference applications in HoloHub, including surgical tool detection and endoscopic tool segmentation.

- Capture, process, and archive video streams with H.264 encoder and decoder support

- x86_64 physical I/O support

- Depth map rendering

- Interoperability with GXF applications

- Support for integrated and discrete GPUs on NVIDIA developer kits

- A deployment stack for NVIDIA IGX Orin

- The holoscan-test-suite utility for use during manufacturing or verification and validation

New operators and reference applications in HoloHub

Among the new reference applications available in HoloHub are SSD surgical tool detection and MONAI endoscopic tool segmentation applications.

The MONAI endoscopic tool segmentation application has been verified on the GI Genius sandbox and is currently deployable to GI Genius Intelligent Endoscopy Modules, Cosmo Pharmaceuticals’ AI-powered endoscopy system. The implementation with Cosmo showcases fast, seamless deployment of HoloHub applications on products and platforms running NVIDIA Holoscan.

The SSD surgical tool detection application was developed to demonstrate how to deploy an object detection model in the Holoscan SDK with custom post-processing steps. It contains a tutorial on exporting a trained PyTorch model checkpoint, modifying the exported ONNX model, writing your own post-processing logic in Python or C++ within the Holoscan SDK, visualizing bounding boxes and text with HoloViz, and putting it all together in a Holoscan application.
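The tutorial's own post-processing code lives in HoloHub and is not reproduced here. As a rough, framework-independent illustration of the kind of logic an SSD-style detector typically needs after inference, the following sketch applies a confidence threshold and then per-class greedy non-maximum suppression (NMS). The function names, box layout, and threshold defaults are assumptions for illustration, and the sketch does not use the Holoscan API.

```python
from typing import List, Tuple

# A box is (x_min, y_min, x_max, y_max) in pixel coordinates (assumed layout).
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def postprocess(boxes: List[Box], scores: List[float], labels: List[int],
                score_threshold: float = 0.5, iou_threshold: float = 0.45):
    """Drop low-confidence detections, then apply per-class greedy NMS."""
    candidates = [i for i, s in enumerate(scores) if s >= score_threshold]
    # Visit the highest-scoring detections first.
    candidates.sort(key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in candidates:
        # Keep i unless it overlaps an already-kept box of the same class too much.
        if all(labels[k] != labels[i] or iou(boxes[i], boxes[k]) < iou_threshold
               for k in kept):
            kept.append(i)
    return [(boxes[i], scores[i], labels[i]) for i in kept]


if __name__ == "__main__":
    boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
    scores = [0.9, 0.8, 0.3]
    labels = [0, 0, 1]
    print(postprocess(boxes, scores, labels))  # [((10, 10, 50, 50), 0.9, 0)]
```

In the example, the second box overlaps the first with an IoU of about 0.82 and is suppressed, while the third falls below the score threshold.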

With the intent of keeping the core of Holoscan SDK optimized and domain-agnostic, all Holoscan SDK sample applications were migrated to HoloHub and the build system was optimized to enable building each application against a pre-installed version of the SDK.

We developed a new basic network operator and its associated application. This basic network operator enables you to send and receive UDP messages over a standard Linux socket. Separate transmit and receive operators are provided so that they can run independently and better suit the needs of the application. The sample application takes the existing ping example that runs over Holoscan ports and instead uses the basic network operator to run over a UDP socket.
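The operator's source is in HoloHub and is not shown in this post. To illustrate the underlying mechanism only, the following plain-Python sketch uses the standard `socket` module to send and receive UDP datagrams over a Linux socket, with transmit and receive kept as independent paths, and sends an incrementing counter in the spirit of the ping example. The helper names and the loopback address are hypothetical; this is not the Holoscan operator API.

```python
import socket


def make_receiver(host: str = "127.0.0.1", port: int = 0) -> socket.socket:
    """Bind a UDP socket; port 0 lets the OS pick a free port."""
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    rx.bind((host, port))
    rx.settimeout(2.0)  # raise socket.timeout instead of blocking forever
    return rx


def transmit(payload: bytes, addr) -> None:
    """Send one datagram; the sender shares no state with the receiver."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as tx:
        tx.sendto(payload, addr)


def receive(rx: socket.socket, max_size: int = 65535) -> bytes:
    """Wait for one datagram (up to the socket timeout) and return its payload."""
    data, _sender = rx.recvfrom(max_size)
    return data


if __name__ == "__main__":
    rx = make_receiver()
    addr = rx.getsockname()
    # Mimic a ping-style exchange: send an incrementing counter as text.
    for count in range(1, 4):
        transmit(str(count).encode(), addr)
        print("received:", receive(rx).decode())
    rx.close()
```

Keeping the two sides separate means either one can run in its own process or on its own host, which mirrors the split into separate transmit and receive operators.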

External contributions to HoloHub are welcome and encouraged.

DELTACAST

DELTACAST, a developer of professional video solutions for a range of broadcast, industrial, and medical applications, is one of the first to contribute to HoloHub with a Holoscan video processing application.

This application supports the DELTACAST.TV 12G SDI Key capture card, which can composite an overlay onto a video feed that is passed into and out of the card. Supporting this card required developing a new output operator as well as modifying the existing input operator.

The resulting sample application can capture a video source stream and transmit the data directly into GPU memory using GPUDirect RDMA, where it can be processed through the endoscopy reference pipeline. It then uses RDMA to pass the output of the pipeline directly back to the capture card, where it can be blended with the low-latency source video.
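On the card itself, blending happens in hardware, and its keying modes and pixel formats are not described in this post. The arithmetic of a standard linear alpha key can be sketched as follows, assuming 8-bit RGB pixels and a per-pixel alpha value in [0, 1]. The function names are hypothetical and the sketch is for illustration only.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # assumed 8-bit RGB


def key_pixel(source: Pixel, overlay: Pixel, alpha: float) -> Pixel:
    """Blend one overlay pixel over the source: out = a*overlay + (1-a)*source."""
    return tuple(round(alpha * o + (1.0 - alpha) * s) for s, o in zip(source, overlay))


def key_frame(source: List[Pixel], overlay: List[Pixel], alpha: List[float]) -> List[Pixel]:
    """Apply per-pixel alpha keying to two flattened frames of equal size."""
    if not (len(source) == len(overlay) == len(alpha)):
        raise ValueError("frames and alpha mask must have the same size")
    return [key_pixel(s, o, a) for s, o, a in zip(source, overlay, alpha)]


if __name__ == "__main__":
    print(key_pixel((0, 0, 0), (200, 100, 50), 0.5))  # (100, 50, 25)
```

An alpha of 0 passes the source pixel through unchanged and an alpha of 1 shows only the overlay, which is why the overlay can cover only the regions the pipeline annotates.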

Capture, process, and archive video streams for AI applications

Four new native operators, now available in HoloHub, perform hardware-accelerated H.264 encoding and decoding along with elementary stream reading and writing. These operators use the NV V4L2-based GXF extensions for H.264 support.

H.264 support enables AI use cases that capture, process, and archive video streams. It also lets Holoscan applications take standard video formats as input instead of the proprietary GXF Tensor format. When the input is an H.264 elementary stream, sample Holoscan application packages are drastically smaller.

Two C++ applications are available now in HoloHub to show the new H.264 encoding and decoding capabilities:

- The h264_video_decode application demonstrates a simple workflow composed of the VideoReadBitstream and VideoDecoder operators, which reads an H.264 elementary stream from a specified input file and decodes it to raw video frames.

- The h264_endoscopy_tool_tracking application is a modified version of the endoscopy_tool_tracking application that replaces the GXF Tensor format input with an H.264 elementary stream. It also shows how to use the VideoEncoder and VideoWriteBitstream operators to encode raw video frames to H.264 and write the H.264 elementary stream directly to disk.
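How VideoReadBitstream parses its input is not described here. For readers new to the format, a raw H.264 elementary stream is commonly stored in the Annex B byte-stream form: a sequence of NAL units, each preceded by a `00 00 01` or `00 00 00 01` start code, where the low five bits of a NAL unit's first byte give its type. The following minimal sketch, assuming that Annex B layout, splits a stream into NAL units. It does not remove emulation-prevention bytes and is not how the HoloHub operators are implemented; the function names are hypothetical.

```python
from typing import List, Tuple

# A few NAL unit types from the H.264 specification (Table 7-1).
NAL_TYPES = {1: "non-IDR slice", 5: "IDR slice", 6: "SEI", 7: "SPS", 8: "PPS", 9: "AUD"}


def split_annexb(stream: bytes) -> List[bytes]:
    """Split an Annex B byte stream into NAL units, with start codes removed."""
    starts: List[Tuple[int, int]] = []  # (start_code_offset, payload_offset)
    i = 0
    while i + 3 <= len(stream):
        if stream[i:i + 3] == b"\x00\x00\x01":
            # A 4-byte start code (00 00 00 01) has one extra leading zero.
            code_start = i - 1 if i > 0 and stream[i - 1] == 0 else i
            starts.append((code_start, i + 3))
            i += 3
        else:
            i += 1
    units = []
    for n, (_, payload) in enumerate(starts):
        # Each NAL unit ends where the next start code begins.
        end = starts[n + 1][0] if n + 1 < len(starts) else len(stream)
        units.append(stream[payload:end])
    return units


def nal_type(unit: bytes) -> int:
    """nal_unit_type is the low 5 bits of the NAL header byte."""
    return unit[0] & 0x1F


if __name__ == "__main__":
    demo = (b"\x00\x00\x00\x01\x67\xaa"       # SPS
            b"\x00\x00\x01\x68\xbb"           # PPS
            b"\x00\x00\x00\x01\x65\xcc\xdd")  # IDR slice
    for unit in split_annexb(demo):
        print(NAL_TYPES.get(nal_type(unit), "other"), unit.hex())
```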

x86_64 physical I/O support

This new release of the Holoscan SDK brings support for physical I/O to x86 platforms.

The high-speed endoscopy application serves as a benchmark for low-latency 4K video at a frame rate of 240 Hz using high-speed, Ethernet-enabled cameras. It can now run on an x86_64 desktop. It has been tested with Rivermax 14.1.1310, GPUDirect RDMA (OFED 5.8.1), and cameras from Emergent Technologies.
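To give a sense of why this application relies on Rivermax and GPUDirect RDMA, the following back-of-the-envelope calculation estimates the uncompressed data rate of a 4K stream at 240 Hz. The 3840x2160 resolution and 8 bits per pixel (raw Bayer) are assumptions for illustration; the actual camera pixel format may differ.

```python
def raw_video_rate_gbps(width: int, height: int, fps: float, bits_per_pixel: float) -> float:
    """Uncompressed video data rate in gigabits per second (1e9 bit/s)."""
    return width * height * fps * bits_per_pixel / 1e9


if __name__ == "__main__":
    # Assumed: 4K UHD, 240 frames per second, 8-bit raw Bayer pixels.
    print(f"{raw_video_rate_gbps(3840, 2160, 240, 8):.2f} Gbit/s")  # 15.93 Gbit/s
```

Under these assumptions the stream carries roughly 16 Gbit/s, or about 2 GB of pixel data every second, before any processing.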

Depth map rendering

This release adds depth map rendering to HoloViz, the visualization component of Holoscan. A depth map is a 2D rectangular array in which each element represents a depth value. HoloViz renders the data as a 3D object using points, lines, or triangles.
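HoloViz's internal implementation is not shown in this post. The following generic sketch illustrates how a depth map can be turned into renderable geometry: every element becomes a vertex (usable directly in points mode), and for triangle rendering each 2x2 neighborhood of elements becomes two triangles. Using the grid indices as x and y is a simplifying assumption; a real renderer would typically apply camera intrinsics or scaling. The function name is hypothetical.

```python
from typing import List, Tuple

Vertex = Tuple[float, float, float]
Triangle = Tuple[int, int, int]


def depth_map_to_mesh(depth: List[List[float]]) -> Tuple[List[Vertex], List[Triangle]]:
    """Turn a rows x cols depth map into vertices and triangle indices.

    Each element becomes a vertex (x=column, y=row, z=depth value); each
    2x2 neighborhood of elements becomes two triangles.
    """
    rows, cols = len(depth), len(depth[0])
    vertices = [(float(c), float(r), float(depth[r][c]))
                for r in range(rows) for c in range(cols)]
    triangles: List[Triangle] = []
    for r in range(rows - 1):
        for c in range(cols - 1):
            i = r * cols + c  # index of this cell's top-left vertex
            triangles.append((i, i + 1, i + cols))             # upper-left half
            triangles.append((i + 1, i + cols + 1, i + cols))  # lower-right half
    return vertices, triangles


if __name__ == "__main__":
    v, t = depth_map_to_mesh([[0, 1, 2, 3], [1, 2, 3, 4], [2, 3, 4, 5]])
    print(len(v), "vertices,", len(t), "triangles")  # 12 vertices, 12 triangles
```

A rows x cols map always yields rows*cols vertices and 2*(rows-1)*(cols-1) triangles, so the geometry grows linearly with the number of depth samples.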

In the current implementation, the color of the elements can also be specified. Depth maps are rendered in 3D and support camera movement. Figure 1 shows a visualization of a depth map with colored texture from a single frame.

Figure 1. New depth map rendering with color elements

Interoperability with GXF applications

While the Holoscan SDK can wrap existing GXF extensions into Holoscan operators, it is also convenient to export native Holoscan operators to GXF so that they can be used in existing GXF applications or in NVIDIA Graph Composer for a no-code experience.

Holoscan SDK v0.5 provides a helper CMake function to wrap Holoscan operators as GXF codelets. For more information, see Using Holoscan Operators in GXF Applications.

Support for integrated and discrete GPUs on Holoscan developer kits

The NVIDIA Clara AGX and NVIDIA IGX Orin developer kits include an integrated GPU (iGPU) as well as one or more discrete GPUs (dGPUs). However, because of overlapping symbols between the nvgpu driver for the iGPU and the open-source NVIDIA kernel module for Linux (OpenRM) driver for the dGPU, additional steps are currently required to use both in parallel.

Starting with the Holoscan SDK v0.5, we provide a utility container on NGC: L4T Compute Assist. It is designed to enable iGPU compute access on the development stack (Holopack) configured for dGPU. This container enables using the iGPU for compute capabilities only and is not suitable for rendering.

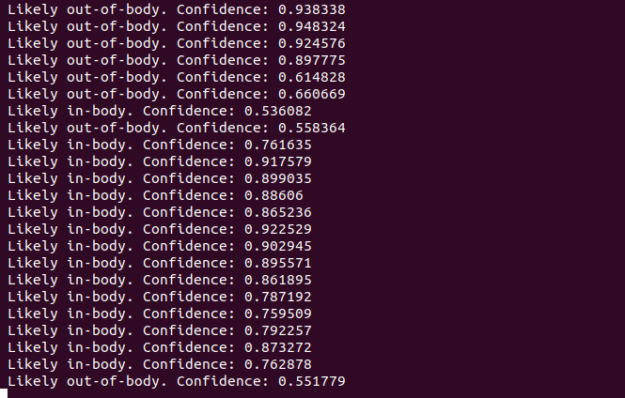

To show the capabilities of the L4T Compute Assist container, we released a new application for out-of-body detection in HoloHub. This application does not require a display and reports the results of the inference in the terminal. It can run within the iGPU container simultaneously with other applications running on the dGPU.

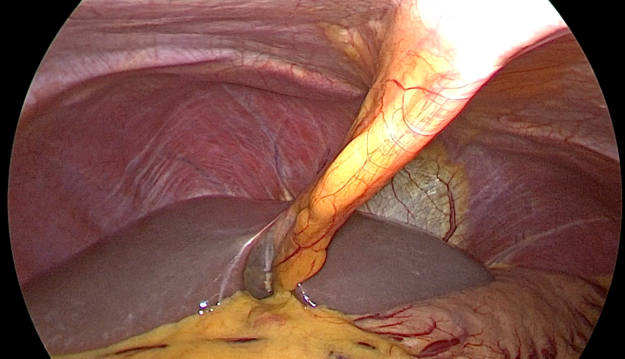

The following figures show the out-of-body detection application running and the input frame, which is not displayed by the application.

a) Frame extracted from input video stream

b) Terminal output including confidence intervals

Figure 2. Example frame and output for the out-of-body-detection application
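The application's model outputs and exact terminal format are not documented in this post. As a hedged illustration of how a two-class classifier's raw scores can be turned into the kind of per-frame confidence line shown in Figure 2, the following sketch applies a numerically stable softmax. The two-logit output, the class order in `LABELS`, and the line format are all hypothetical.

```python
import math
from typing import List, Sequence

LABELS = ("in-body", "out-of-body")  # hypothetical class order


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def report(frame_index: int, logits: Sequence[float]) -> str:
    """Format one inference result as a single terminal line."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return f"frame {frame_index}: {LABELS[best]} (confidence {probs[best]:.2f})"


if __name__ == "__main__":
    print(report(0, [0.2, 2.3]))  # frame 0: out-of-body (confidence 0.89)
```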

Deployment stack for NVIDIA IGX Orin

As with previous Holoscan releases, this SDK release is accompanied by an updated version of the OpenEmbedded/Yocto layer for NVIDIA Holoscan. The layer provides a reference deployment platform that supports the Holoscan developer kits, Holoscan SDK, and HoloHub reference applications.

In addition to support for the new NVIDIA IGX Orin developer kit, this update to the deployment stack includes updates to core components:

- The underlying L4T platform and kernel

- Discrete GPU (dGPU) drivers

- CUDA

- cuDNN

- Mellanox OFED

- TensorRT

We also added support for the dGPU container runtime, the PREEMPT_RT patch, and Emergent high-speed GigE cameras.

Native debugging and application profiling are crucial for the preparation of production-ready deployments. To facilitate that, this update to the deployment stack also includes additional support for both local and remote debugging through GDB, as well as dGPU application profiling using NVIDIA Nsight Systems.

The deployment stack is provided both as a standalone source release on the meta-tegra-holoscan GitHub repo and as a complete build container hosted on NGC that includes all the dependencies and scripts you need to build and flash an image to a Holoscan devkit with just a few commands.

For more information about the full list of changes introduced by this deployment stack release, see the Holoscan OpenEmbedded/Yocto layer README and changelog.

holoscan-test-suite utility

Users of NVIDIA IGX Orin and derivative designs need testing support, both to verify the correct operation of assembled systems at manufacturing time and during development when changes are applied to hardware or software. Robust systems demand thorough testing that proves the system’s requirements and specifications are met. Applications that require submissions to regulatory bodies must provide not only test results but also configuration data that makes it possible to fully reconstruct the system under test.

The holoscan-test-suite utility provides a framework and a basic set of system verification scripts that are easy to use, both during manufacturing and during verification and validation, and easy for you to extend.

The test suite starts with a set of tests that are written in Python or shell scripts, adds a tool for generating a configuration report, and wraps that up with a simple web interface.

Python and shell scripts are wrapped into tests executed by the pytest framework, so running tests in a command-line or CI environment is straightforward. The test suite produces a YAML version of the test results, making it easy to include them in the records associated with each manufactured system. You can modify existing tests and add new ones by cloning the holoscan-test-suite repo, which also makes it easy to adopt future tests from NVIDIA.
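The suite's actual layout and report format are documented in its README and are not reproduced here. As a hedged illustration of the general pattern described above (a shell check wrapped in a pytest-style test, with outcomes recorded as YAML), the following sketch uses only the Python standard library. The function names, the `echo ok` stand-in check, and the flat YAML layout are hypothetical. With pytest installed, running `pytest` on this file would collect `test_shell_check_passes` because its name starts with `test_`; the small `run_all` helper is a stand-in for environments without pytest.

```python
import subprocess
from typing import Callable, Dict, List


def run_script(cmd: List[str]) -> subprocess.CompletedProcess:
    """Run a shell script or command and capture its text output."""
    return subprocess.run(cmd, capture_output=True, text=True, timeout=60)


def test_shell_check_passes() -> None:
    # Stand-in for a real verification script; plain asserts report failures.
    result = run_script(["sh", "-c", "echo ok"])
    assert result.returncode == 0
    assert result.stdout.strip() == "ok"


def run_all(tests: Dict[str, Callable[[], None]]) -> Dict[str, str]:
    """Minimal runner: map each test name to passed, failed, or error."""
    outcomes = {}
    for name, fn in tests.items():
        try:
            fn()
            outcomes[name] = "passed"
        except AssertionError:
            outcomes[name] = "failed"
        except Exception:
            outcomes[name] = "error"
    return outcomes


def results_to_yaml(results: Dict[str, str]) -> str:
    """Emit a flat name -> outcome mapping as YAML (simple keys only)."""
    return "".join(f"{name}: {outcome}\n" for name, outcome in sorted(results.items()))


if __name__ == "__main__":
    print(results_to_yaml(run_all({"test_shell_check_passes": test_shell_check_passes})), end="")
```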

For more information, see the README file included with the project.

Get started with Holoscan SDK

The quickest way to get started with Holoscan SDK v0.5 is to run the Holoscan container and start exploring the examples.

For Python developers, Holoscan SDK is available from PyPI. The Holoscan SDK is also available as a Debian package.
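After installing from PyPI, one way to confirm which version is present is to query the installed package metadata with the standard library. This sketch assumes the PyPI distribution is named `holoscan`; check the installation guide for the exact package name and supported Python versions.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_holoscan_version() -> Optional[str]:
    """Return the installed version of the assumed "holoscan" distribution, or None."""
    try:
        return version("holoscan")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    v = installed_holoscan_version()
    print(f"Holoscan SDK {v}" if v else "holoscan is not installed in this environment")
```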

Source: NVIDIA