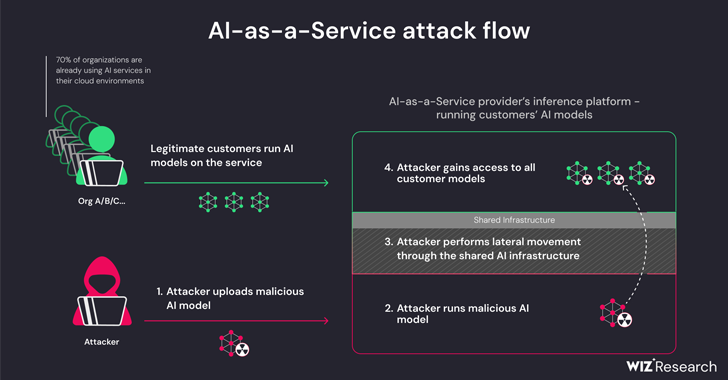

New research has found that artificial intelligence (AI)-as-a-service providers such as Hugging Face are susceptible to two critical risks that could allow threat actors to escalate privileges, gain cross-tenant access to other customers’ models, and even take over the continuous integration and continuous deployment (CI/CD) pipelines.

“Malicious models represent a major risk to AI systems,

Source:: The Hackers News