End clients are building business solutions that converge high performance computing (HPC) quantitative finance and AI. Dell Technologies, along with NVIDIA, is uniquely positioned to accelerate generative AI workloads and data analytics as well as HPC quantitative finance applications, for clients who increasingly need converged HPC quantitative finance plus AI workloads.

Dell and NVIDIA first focused on quantitative applications, setting new records on NVIDIA-Certified Dell PowerEdge XE9680 servers with NVIDIA GPUs. The system was independently audited by the Strategic Technology Analysis Center (STAC) on quantitative finance HPC workloads. For more information, see NVIDIA H100 System for HPC and Generative AI Sets Record for Financial Risk Calculations.

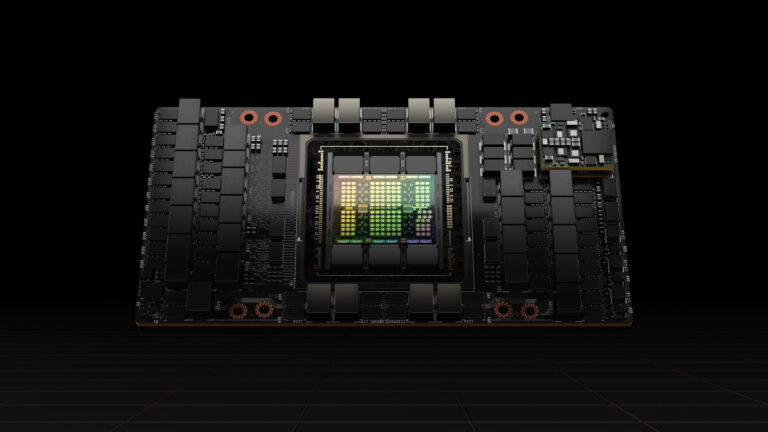

NVIDIA H100 Tensor Core GPUs were featured in a stack that set several records in a recent STAC-A2 audit with eight NVIDIA H100 SXM5 80 GiB GPUs, offering incredible speed with great efficiency and cost savings. Such systems are ideal for both HPC quantitative finance applications and neural-network-based deep learning workloads.

Designed by quants and technologists from some of the world’s largest banks, STAC-A2 is a technology benchmark standard based on financial market risk analysis. The benchmark is a Monte Carlo estimation of Heston-based Greeks for path-dependent, multi-asset options with early exercise.
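STAC-A2 prices path-dependent, multi-asset options with early exercise. That workload is far too involved to reproduce here, so the following is a minimal sketch of two core ingredients only. It simulates a single asset under the Heston stochastic-volatility model, using a log-Euler scheme with full truncation of the variance, and prices a plain European call. It then estimates delta, one of the Greeks, by bump-and-revalue with common random numbers. The function names, parameter values, and discretization scheme are illustrative assumptions. They are not taken from the STAC-A2 specification or the NVIDIA implementation.

```python
import math
import random


def heston_call_mc(s0, v0, r, kappa, theta, xi, rho, strike, maturity,
                   n_steps=50, n_paths=5000, seed=42):
    """Monte Carlo price of a European call under the Heston model.

    Illustrative sketch: single asset, European payoff, log-Euler scheme with
    full truncation of the variance. Not the STAC-A2 workload itself.
    """
    rng = random.Random(seed)  # fixed seed -> common random numbers across calls
    dt = maturity / n_steps
    sqrt_dt = math.sqrt(dt)
    rho_perp = math.sqrt(1.0 - rho * rho)
    payoff_sum = 0.0
    for _ in range(n_paths):
        log_s, v = math.log(s0), v0
        for _ in range(n_steps):
            z1 = rng.gauss(0.0, 1.0)
            z2 = rho * z1 + rho_perp * rng.gauss(0.0, 1.0)  # correlate the two drivers
            v_pos = max(v, 0.0)  # full truncation: negative variance is treated as 0
            log_s += (r - 0.5 * v_pos) * dt + math.sqrt(v_pos) * sqrt_dt * z1
            v += kappa * (theta - v_pos) * dt + xi * math.sqrt(v_pos) * sqrt_dt * z2
        payoff_sum += max(math.exp(log_s) - strike, 0.0)
    return math.exp(-r * maturity) * payoff_sum / n_paths


def bump_and_revalue_delta(params, rel_bump=0.01):
    """Central-difference delta. Both revaluations reuse the same seed, so the
    shared random draws largely cancel in the difference."""
    s0 = params["s0"]
    h = s0 * rel_bump
    up = heston_call_mc(**{**params, "s0": s0 + h})
    down = heston_call_mc(**{**params, "s0": s0 - h})
    return (up - down) / (2.0 * h)


if __name__ == "__main__":
    params = dict(s0=100.0, v0=0.04, r=0.02, kappa=1.5, theta=0.04,
                  xi=0.3, rho=-0.7, strike=100.0, maturity=1.0)
    print("price:", heston_call_mc(**params))
    print("delta:", bump_and_revalue_delta(params))
```

Each path is independent of every other path, so the outer loop is embarrassingly parallel. This is why Monte Carlo Greeks map well onto thousands of GPU threads.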

STAC recently performed STAC-A2 benchmark tests measuring performance, scaling, quality, and resource efficiency. The stack under test (SUT) was a Dell PowerEdge XE9680 server with eight NVIDIA H100 SXM5 80 GiB GPUs. Compared to all publicly reported solutions to date, this system set numerous performance and efficiency records:

- The highest throughput (561 options / second) (STAC-A2.β2.HPORTFOLIO.SPEED)

- The fastest warm time (7.40 ms) in the baseline Greeks benchmark (STAC-A2.β2.GREEKS.TIME.[WARM | COLD])

- The fastest warm (160 ms) and cold (598 ms) times in the large Greeks benchmarks (STAC-A2.β2.GREEKS.10-100K-1260.TIME.[WARM | COLD])

- The most correlated assets (440) and Monte Carlo paths (316,000,000) simulated in 10 minutes (STAC-A2.β2.GREEKS.[MAX_ASSETS | MAX_PATHS])

- The best energy efficiency (364,945 options / kWh) (STAC-A2.β2.HPORTFOLIO.ENERG_EFF)

Compared to a liquid-cooled solution using four GPUs (INTC230927), this 8-GPU NVIDIA solution achieved the following:

- 16% more energy-efficient (STAC-A2.β2.HPORTFOLIO.ENERG_EFF)

- 2.5x / 1.8x the speed in the warm / cold runs of the large Greeks benchmarks (STAC-A2.β2.GREEKS.10-100K-1260.TIME.[WARM | COLD])

- 1.2x / 7.5x the speed in the warm / cold runs of the baseline Greeks benchmark (STAC-A2.β2.GREEKS.TIME.[WARM | COLD])

- Simulated 2.4x the correlated assets and 316x the Monte Carlo paths in 10 minutes (STAC-A2.β2.GREEKS.[MAX_ASSETS | MAX_PATHS])

- 2.0x the throughput (STAC-A2.β2.HPORTFOLIO.SPEED)

Compared to a solution using eight NVIDIA H100 PCIe GPUs with previous versions of the NVIDIA STAC Pack and CUDA, this solution using NVIDIA H100 SXM5 GPUs achieved the following:

- 1.59x the throughput (STAC-A2.β2.HPORTFOLIO.SPEED)

- 1.17x the speed in the warm runs of the baseline Greeks benchmark (STAC-A2.β2.GREEKS.TIME.[WARM | COLD])

- 3.1x / 3.0x the speed in the warm / cold runs of the large Greeks benchmarks (STAC-A2.β2.GREEKS.10-100K-1260.TIME.[WARM | COLD])

- Simulated 10% more correlated assets in 10 minutes (STAC-A2.β2.GREEKS.[MAX_ASSETS | MAX_PATHS])

- 17% more energy-efficient (STAC-A2.β2.HPORTFOLIO.ENERG_EFF)

In addition to the hardware, NVIDIA provides the key software layers through the NVIDIA HPC SDK. The SDK gives developers multiple options. These include the NVIDIA CUDA SDK for CUDA C++, as well as support for other languages and directive-based approaches such as OpenMP and OpenACC, C++17 standard parallelism, and Fortran parallel constructs.

This particular implementation was developed on CUDA 12.2 using the highly optimized libraries delivered with CUDA:

- cuBLAS: The GPU-enabled implementation of the linear algebra package BLAS.

- cuRAND: A parallel and efficient GPU implementation of random-number generators.
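Multi-asset Monte Carlo needs correlated random shocks, as in the STAC-A2 tests that scale up the number of correlated assets. A common pattern is assumed here rather than taken from the NVIDIA implementation. First, draw independent standard normals, which is the kind of work cuRAND does. Then correlate them by multiplying by the Cholesky factor of the correlation matrix, a dense linear-algebra step of the kind cuBLAS accelerates. The pure-Python sketch below shows only the math on a CPU. The function names and the 3x3 correlation matrix are hypothetical.

```python
import math
import random


def cholesky(corr):
    """Lower-triangular L with L @ L.T == corr (corr must be positive definite)."""
    n = len(corr)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(corr[i][i] - s)
            else:
                lower[i][j] = (corr[i][j] - s) / lower[j][j]
    return lower


def correlated_normals(lower, n_samples, seed=0):
    """Draw independent N(0, 1) vectors (the RNG step), then multiply each by
    the Cholesky factor (the matrix-vector step) to impose the correlation."""
    rng = random.Random(seed)
    n = len(lower)
    samples = []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(n)]
        samples.append([sum(lower[i][k] * z[k] for k in range(i + 1)) for i in range(n)])
    return samples


def sample_correlation(samples, i, j):
    """Pearson correlation between components i and j of the samples."""
    n = len(samples)
    mi = sum(s[i] for s in samples) / n
    mj = sum(s[j] for s in samples) / n
    cov = sum((s[i] - mi) * (s[j] - mj) for s in samples)
    var_i = sum((s[i] - mi) ** 2 for s in samples)
    var_j = sum((s[j] - mj) ** 2 for s in samples)
    return cov / math.sqrt(var_i * var_j)


if __name__ == "__main__":
    corr = [[1.0, 0.5, 0.2], [0.5, 1.0, 0.3], [0.2, 0.3, 1.0]]
    draws = correlated_normals(cholesky(corr), n_samples=20000)
    print("sample corr(0, 1):", round(sample_correlation(draws, 0, 1), 3))
```

On a GPU, the independent draws for all paths and time steps are typically generated in bulk. The correlation step then becomes one large matrix multiply instead of many small matrix-vector products.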

The implementation was developed with the following tools:

- NVIDIA Nsight Systems for timeline profiling

- NVIDIA Nsight Compute for kernel profiling

- NVIDIA Compute Sanitizer and CUDA-GDB for debugging

The different operations of the benchmark are implemented using building blocks from a set of quantitative finance reference implementations of state-of-the-art algorithms, including asset diffusion and Longstaff-Schwartz pricing. These are exposed in a modular and maintainable framework using object-oriented programming in CUDA/C++.
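The two building blocks named above, asset diffusion and Longstaff-Schwartz pricing, can be illustrated together in a compact sketch. It makes several simplifying assumptions that differ from STAC-A2: a single asset under geometric Brownian motion instead of multi-asset Heston, an American put exercisable on a discrete grid, and a quadratic polynomial regression basis. The function names and parameters are hypothetical. The core Longstaff-Schwartz idea is unchanged: work backward through the exercise dates, regress discounted future cash flows on the current price for in-the-money paths, and exercise wherever the immediate payoff beats the estimated continuation value.

```python
import math
import random


def solve_3x3(a, b):
    """Solve the 3x3 system a @ x = b by Gaussian elimination with partial pivoting."""
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda row: abs(m[row][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            factor = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= factor * m[col][c]
    x = [0.0, 0.0, 0.0]
    for r in range(2, -1, -1):
        x[r] = (m[r][3] - sum(m[r][c] * x[c] for c in range(r + 1, 3))) / m[r][r]
    return x


def lsm_american_put(s0, strike, r, sigma, maturity,
                     n_steps=50, n_paths=10000, seed=1):
    """Longstaff-Schwartz price of a put exercisable on n_steps dates (GBM asset)."""
    rng = random.Random(seed)
    dt = maturity / n_steps
    disc = math.exp(-r * dt)
    drift = (r - 0.5 * sigma ** 2) * dt
    vol = sigma * math.sqrt(dt)
    # 1) Asset diffusion: paths[i][t] is the price at time (t + 1) * dt.
    paths = []
    for _ in range(n_paths):
        s, path = s0, []
        for _ in range(n_steps):
            s *= math.exp(drift + vol * rng.gauss(0.0, 1.0))
            path.append(s)
        paths.append(path)
    # 2) Cash flows if the option is held to maturity.
    cash = [max(strike - p[-1], 0.0) for p in paths]
    # 3) Backward induction over the earlier exercise dates.
    for t in range(n_steps - 2, -1, -1):
        cash = [c * disc for c in cash]  # discount realized cash flows to time (t + 1) * dt
        itm = [i for i in range(n_paths) if paths[i][t] < strike]
        if len(itm) < 3:
            continue  # too few in-the-money paths to fit the regression
        # Least-squares fit of continuation value on the basis 1, x, x^2 (x = S / K).
        ata = [[0.0] * 3 for _ in range(3)]
        atb = [0.0] * 3
        for i in itm:
            x = paths[i][t] / strike
            basis = (1.0, x, x * x)
            for a in range(3):
                atb[a] += basis[a] * cash[i]
                for b in range(3):
                    ata[a][b] += basis[a] * basis[b]
        beta = solve_3x3(ata, atb)
        for i in itm:
            x = paths[i][t] / strike
            continuation = beta[0] + beta[1] * x + beta[2] * x * x
            exercise = strike - paths[i][t]
            if exercise > continuation:
                cash[i] = exercise  # exercise now; later cash flows are dropped
    # 4) Discount from the first exercise date to today; allow immediate exercise.
    return max(disc * sum(cash) / n_paths, max(strike - s0, 0.0))


if __name__ == "__main__":
    print("American put:", lsm_american_put(36.0, 40.0, 0.06, 0.2, 1.0))
```

Separating the diffusion step from the regression-and-exercise step mirrors the modular, building-block structure the text describes. With that separation, the same pricer could in principle be fed paths from a different model, such as Heston.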

HPC plus AI extensions

In their real-world workflows, end customers also perform other price-discovery and market-risk calculations:

- Sensitivity Greeks

- Profit and loss (P&L) calculations

- Value at risk (VaR)

- Margin and counterparty credit risk (CCR) calculations, such as credit valuation adjustment (CVA)
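Value at risk, and its companion expected shortfall (ES), are typically read off a simulated or historical P&L distribution. The sketch below assumes a single linear position with normally distributed one-day returns, purely so the result can be checked against the closed-form normal VaR. Real portfolios require revaluing every instrument in every scenario, which is what makes these calculations compute-intensive. The function names, tail convention, and parameter values are illustrative assumptions.

```python
import random
from statistics import NormalDist


def simulate_pnl(position_value, daily_vol, n_scenarios, seed=3):
    """Hypothetical one-day P&L scenarios for a single linear position."""
    rng = random.Random(seed)
    return [position_value * daily_vol * rng.gauss(0.0, 1.0) for _ in range(n_scenarios)]


def var_and_es(pnl, confidence=0.99):
    """Empirical VaR and expected shortfall, both reported as positive losses.

    Uses the worst (1 - confidence) fraction of scenarios as the tail; other
    empirical quantile conventions exist and give slightly different numbers.
    """
    losses = sorted((-x for x in pnl), reverse=True)  # largest loss first
    k = max(1, round(len(losses) * (1.0 - confidence)))
    tail = losses[:k]
    return tail[-1], sum(tail) / k


if __name__ == "__main__":
    pnl = simulate_pnl(position_value=10_000_000, daily_vol=0.02, n_scenarios=100_000)
    var, es = var_and_es(pnl)
    z = NormalDist().inv_cdf(0.99)
    print(f"99% VaR: {var:,.0f}  (closed form: {10_000_000 * 0.02 * z:,.0f})")
    print(f"99% ES:  {es:,.0f}")
```

Swapping `simulate_pnl` for full scenario revaluation of a real book leaves `var_and_es` unchanged. In a full revaluation setting, the pricing step dominates the cost, not the tail statistics.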

Pricing and risk calculation, algorithmic trading model development, and backtesting need a robust, scalable environment. In areas such as CVA, dense server nodes with eight NVIDIA GPUs have been shown to reduce the number of required nodes from 100 to 4 for simulation- and compute-intensive calculations (separately from STAC benchmarking). This reduces the total cost of ownership (TCO).
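CVA is simulation-heavy because it needs expected exposure (EE) at many future dates. The sketch below estimates EE on a time grid by Monte Carlo and plugs it into the standard discretized unilateral formula, CVA ~ (1 - R) * sum_i DF(t_i) * EE(t_i) * PD(t_{i-1}, t_i). Everything here is a simplifying assumption rather than how any production system works: a single long forward on an asset following geometric Brownian motion, a constant interest rate and hazard rate, 40% recovery, and exposure independent of default (no wrong-way risk). Production CVA instead revalues an entire netting set at every grid date on every path. That full revaluation is what drives node counts like the ones cited above.

```python
import math
import random


def expected_exposure_forward(s0, strike, r, sigma, times, n_paths=20000, seed=7):
    """EE(t) = E[max(V(t), 0)] for a long forward that matures at times[-1]."""
    rng = random.Random(seed)
    maturity = times[-1]
    ee = [0.0] * len(times)
    for _ in range(n_paths):
        s, t_prev = s0, 0.0
        for k, t in enumerate(times):
            dt = t - t_prev
            s *= math.exp((r - 0.5 * sigma ** 2) * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0))
            t_prev = t
            value = s - strike * math.exp(-r * (maturity - t))  # forward value at time t
            ee[k] += max(value, 0.0)  # only positive value is exposed to default
    return [e / n_paths for e in ee]


def cva(ee, times, r, hazard_rate, recovery=0.4):
    """Unilateral CVA with a constant hazard rate and flat discounting."""
    total, t_prev = 0.0, 0.0
    for e, t in zip(ee, times):
        pd = math.exp(-hazard_rate * t_prev) - math.exp(-hazard_rate * t)  # default in (t_prev, t]
        total += math.exp(-r * t) * e * pd
        t_prev = t
    return (1.0 - recovery) * total


if __name__ == "__main__":
    grid = [0.25 * i for i in range(1, 9)]  # quarterly grid out to 2 years
    ee = expected_exposure_forward(100.0, 100.0, 0.02, 0.2, grid)
    print("CVA:", cva(ee, grid, r=0.02, hazard_rate=0.02))
```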

We increasingly see end users' HPC quantitative finance and AI requirements converging, with financial solutions that combine quantitative finance, data engineering and analytics, machine learning (ML), and neural-network-based AI algorithms.

Current solutions start from traditional quantitative models and extend them with data engineering and analytics, accelerated ML (such as XGBoost) using tools such as NVIDIA RAPIDS, and deep learning. Deep learning here spans recurrent neural networks (RNNs), including long short-term memory (LSTM) networks, as well as more advanced areas such as large language models (LLMs).

AI has also moved into newer areas such as generative AI. Generative AI refers to AI algorithms that use existing content, such as text, audio, video, images, and even code, to generate new content. Examples of generative AI models range from generative pretrained transformer (GPT) models to the diffusion models used in image generation.

More diverse HPC workloads based on reinforcement learning (RL) are also becoming prominent in finance, with applications such as limit order book price prediction.

Agent-based models are used to reproduce the interactions between economic agents, such as market participants in financial transactions. Learning in this setting is a trial-and-error process, akin to a child learning about the real world. The agent operates in a simulated environment that has an end task and a set of states, which may or may not be observable. Its possible actions move it to other states and earn positive or negative rewards. Each state has a value function that is iteratively updated through this trial-and-error process. The agent must solve for the optimal policy, which is the behavior equivalent to a strategy.

In most real-world problems, the optimal behavior is hard to determine directly. With a learnable policy, the agent performs an action or a sequence of actions, and the resulting rewards show whether those actions were good or bad. RL is the technique for adjusting the policy to maximize this reward.
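The trial-and-error loop described above can be made concrete with tabular Q-learning. This is one standard RL algorithm, chosen here for illustration because the text does not name a specific one. In the hypothetical toy environment below, the agent starts at state 0 of a five-state corridor. It pays a small cost for each move and earns a reward of 1 on reaching the goal state. Epsilon-greedy exploration supplies the trial and error. The temporal-difference update iteratively refines the action-value estimates from which the policy is read. The environment, rewards, and hyperparameters are all illustrative assumptions, not a market model.

```python
import random

N_STATES = 5         # hypothetical corridor: states 0..4, state 4 is the goal
ACTIONS = (-1, +1)   # move left or move right


def step(state, action):
    """Toy environment: +1 for reaching the goal, -0.01 for every other move."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    if nxt == N_STATES - 1:
        return nxt, 1.0, True
    return nxt, -0.01, False


def q_learning(episodes=500, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    """Tabular Q-learning; q[s][a] estimates the value of taking action a in state s."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore at random with probability epsilon.
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = max(range(2), key=lambda i: q[state][i])
            nxt, reward, done = step(state, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])  # temporal-difference update
            state = nxt
    return q


def greedy_policy(q):
    """Best action in each non-terminal state under the learned values."""
    return [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(N_STATES - 1)]


if __name__ == "__main__":
    print("policy:", greedy_policy(q_learning()))
```

After training, the greedy policy moves right in every non-terminal state. A financial application would replace the corridor with a simulated market environment and far richer states and actions. That larger simulation is what turns RL into an HPC workload.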

Such RL algorithms have been extended to RL from human feedback (RLHF), which is used to train LLMs such as ChatGPT to optimize a policy against a reward learned from human feedback. NVIDIA provides tools to train similar GPT-based models that incorporate RLHF.

Organizations can apply such foundation LLMs to unstructured information sources such as financial news. Customization techniques such as additional training, parameter-efficient fine-tuning, and fine-tuning help the models better understand the financial domain.

End users can combine such customized models with techniques such as retrieval-augmented generation (RAG) to gain an information edge. They can query otherwise untapped unstructured data sources and obtain insights for decision-making.
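The retrieval half of RAG can be sketched in a few lines: score stored documents against the user's query, keep the best matches, and place them in the prompt sent to the LLM. The sketch below is a simplified illustration. Bag-of-words cosine similarity stands in for the learned embedding model and vector database that real RAG systems use, and the generation step, which sends the prompt to a customized LLM, is omitted. The function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_prompt(query, documents, k=2):
    """Augment the question with retrieved context before it goes to the LLM."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "The Federal Reserve held interest rates steady and signaled patience.",
        "Company X reported higher quarterly revenue in its 10-Q filing.",
        "Oil prices fell on news of rising supply.",
    ]
    print(build_prompt("What did the Federal Reserve do with interest rates?", docs, k=1))
```

Grounding the prompt in retrieved documents is what lets the model answer from sources it was never trained on, such as the latest filings or commentary.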

For example, beyond traditional sources of tabular market data, these techniques can be applied to news, financial documents, 10-K and 10-Q filings, and Federal Reserve commentary to generate insights and signals, referred to as alternative data (alt data). These alt data signals can support systematic algorithmic trading models as well as discretionary traders.

Summary

The convergence of HPC and AI is happening as financial firms work on big-picture solutions. Stakeholders include both the sell side (global market banks and broker-dealers) and the buy side (insurers, hedge funds, market makers, high-frequency traders, and asset managers). Solutions typically combine various modeling techniques, including HPC quantitative finance, ML, RL, and LLM-based generative AI models for natural language processing (NLP).

Because these systems can serve multiple HPC plus AI workloads, end clients can maximize return on investment (ROI) and lower TCO. This holds even for the largest and most demanding AI, ML, and deep learning (DL) training, HPC modeling, and simulation initiatives.

Source: NVIDIA