Soccer is considered one of the most popular sports around the world. And with good reason: the action is often intense, and the game combines both physicality and skill from the players that can be thrilling to watch. So it should come as no surprise that there are folks out there who are working to teach robots the finer points of the game, including how to gather the ball, line up a shot, pass, and score a goal.

In fact, an entire competition is devoted to this very idea. The RoboCup Small Size League (SSL) Vision Blackout Technical Challenge encourages teams to “explore local sensing and processing rather than the typical approach of an off-board computer and a global set of cameras sensing the environment.” Student João Guilherme, his instructor Edna Barros, and other SSL teammates from the Federal University of Pernambuco in Recife, Brazil, built an omnidirectional robot powered by the NVIDIA Jetson Nano Developer Kit to execute soccer tasks autonomously.

The team equipped their omnidirectional robot with a monocular camera so that it can autonomously perform the following tasks:

- Localization

- Soccer ball detection and grabbing

- Coordinate calculation

- Passing the ball to other team robots

- Scoring on an empty goal

The team built the robot with an AI software pipeline running at an average processing speed of 30 FPS, with the hardware consuming only around 10.8 W of power.

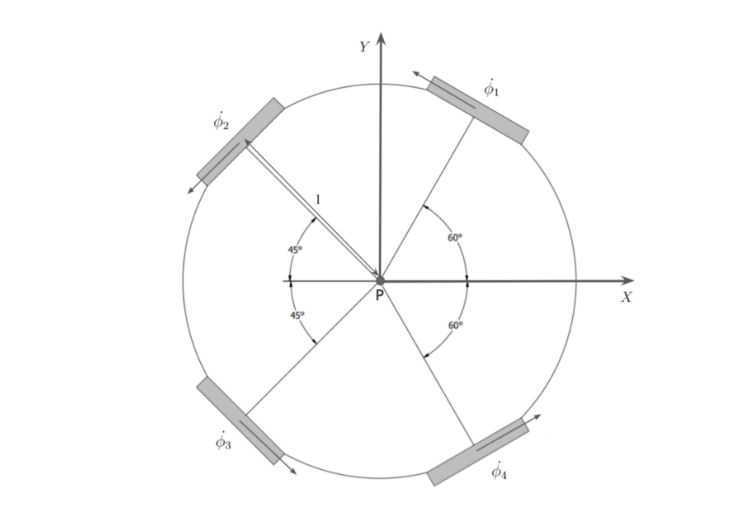

The robot is a four-wheeled omnidirectional platform with a kicking device on its front. Figure 1 shows the geometry of the robot.

Figure 1. The movement capabilities of the omnidirectional robot powered by the NVIDIA Jetson Nano Developer Kit to execute soccer tasks autonomously
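As a rough illustration of what "omnidirectional" means for a four-wheeled base, the sketch below converts a desired body velocity into individual wheel speeds using the standard omni-wheel inverse kinematics. The wheel mounting angles and the wheel-to-center distance are assumed values chosen for the example, not the team's actual geometry from Figure 1.

```python
import math

# Hypothetical geometry: wheel mounting angles (radians, measured from the
# robot's forward x axis) and distance from the robot center to each wheel.
WHEEL_ANGLES = [math.radians(a) for a in (45.0, 135.0, 225.0, 315.0)]
WHEEL_DISTANCE_M = 0.08


def wheel_speeds(vx, vy, omega, angles=WHEEL_ANGLES, radius=WHEEL_DISTANCE_M):
    """Return the linear rim speed (m/s) each wheel must produce.

    vx, vy: desired body velocity in the robot frame (m/s).
    omega: desired angular velocity (rad/s, counterclockwise positive).
    """
    return [
        # Project the body velocity onto each wheel's rolling direction,
        # then add the contribution of the rotation about the center.
        -math.sin(a) * vx + math.cos(a) * vy + radius * omega
        for a in angles
    ]
```

Because every wheel contributes to translation in both axes and to rotation, the robot can move in any direction while turning, which is what lets it line up a shot without first reorienting its whole body.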

“We evaluate our system on three soccer tasks: grabbing a ball, scoring a goal, and passing the ball, achieving 80%, 80%, and 46.7% success rates, respectively,” the team explains in Towards an Autonomous RoboCup Small Size League Robot.

During tournament play, teams use off-field computers to execute most of the computation, receiving the position of the ball and gathering field geometry information and referee commands. The matches are played between teams of six (division B) and 11 (division A) robots, and the robots receive navigation commands through RF communication with minimal bandwidth. The diameter and height of the robots are limited to 180 millimeters and 150 millimeters, respectively, hence the name Small Size League.

The SSL RoboCup competitions include four stages:

In addition, this challenge requires the robot to detect objects in the field, estimate their position, compute navigation paths, and keep records of past trajectories.

“SSL matches are highly dynamic environments with extremely resource-constrained robots, requiring solutions to consider size, power consumption, accuracy, and processing speed trade-offs. This work presents an architecture that enables these robots to execute basic soccer tasks autonomously, that is, without receiving any external information,” according to Guilherme and his teammates in Towards an Autonomous RoboCup Small Size League Robot.

Project hardware

The team used the following hardware in their project:

- A Jetson Nano Developer Kit, to perform embedded vision and decision making

- An omnidirectional robot

- A Logitech C922 camera, to provide monocular vision

- Inertial sensors, to implement odometry estimation

- An STM32F767ZI microcontroller unit (MCU), to receive target relative positions and navigation flags from the Nano and execute low-level control and trajectory estimation using inertial odometry

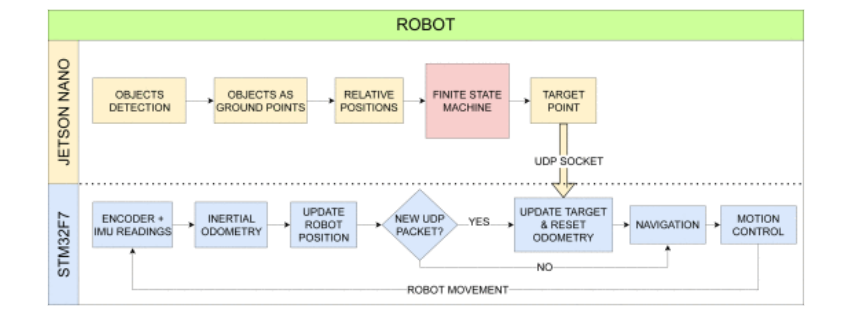

Figure 2. The AI detection pipeline and movement planning of the soccer robot

For more information about the hardware used, see RobôCIn 2020 Team Description Paper.

Technical challenges

During the competition’s Vision Blackout Challenge, the winning robot must be able to complete a variety of soccer-based skills, including grabbing a stationary ball, scoring on an empty goal, moving to specific coordinates, and scoring an indirect goal (passing to another robot).

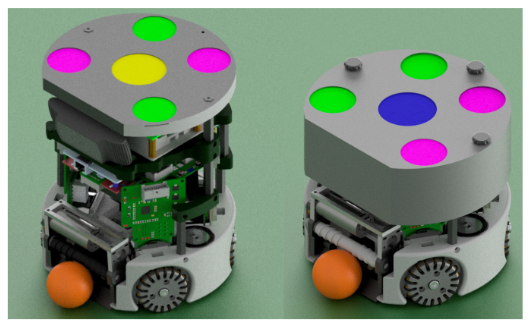

The robot must be able to perform these skills using only embedded sensing and processing. There are no height restrictions for this challenge, so the team added an onboard camera, the Jetson Nano, and a power supply board on top of their typical robot.

Figure 3. The team’s soccer-playing robot modified for the Vision Blackout Challenge (left) and their original robot (right)

In addition, this challenge requires the robot to detect objects in the field, estimate their position, compute navigation paths, and keep records of past trajectories. The SSL soccer matches make use of external cameras and offboard computers for perceiving the environment and sending commands to the robots.

According to the researchers, the SSL Vision architecture “presents limitations such as the camera’s field-of-view, color segmentation, software latency, and communication dropouts, forcing teams to develop solutions for dealing with complex conditions. For example, one common problem during matches is ball occlusion, which occurs when a robot’s projection on the camera image overlaps the ball. Another issue is that the ball and robot position flicks, occasionally not detecting or falsely detecting them.”

In the SSL contests, the robots and balls achieve up to 3.7 m/s and 6.5 m/s velocities, respectively, resulting in a fast-moving game requiring high-throughput solutions. Additionally, the size limitations coupled with using a battery as a power source require solutions to have low-power consumption. Also, precise kicks and passes over long distances are performed during matches, requiring accurate position estimations.

The team also noted the importance of accurate motor control, so the robot can move across the soccer field and keep its measured position accurate. The team needed a way to reduce the rate at which the robot’s internal understanding of its position diverges from its actual physical position. For more details, see Towards an Autonomous RoboCup Small Size League Robot.

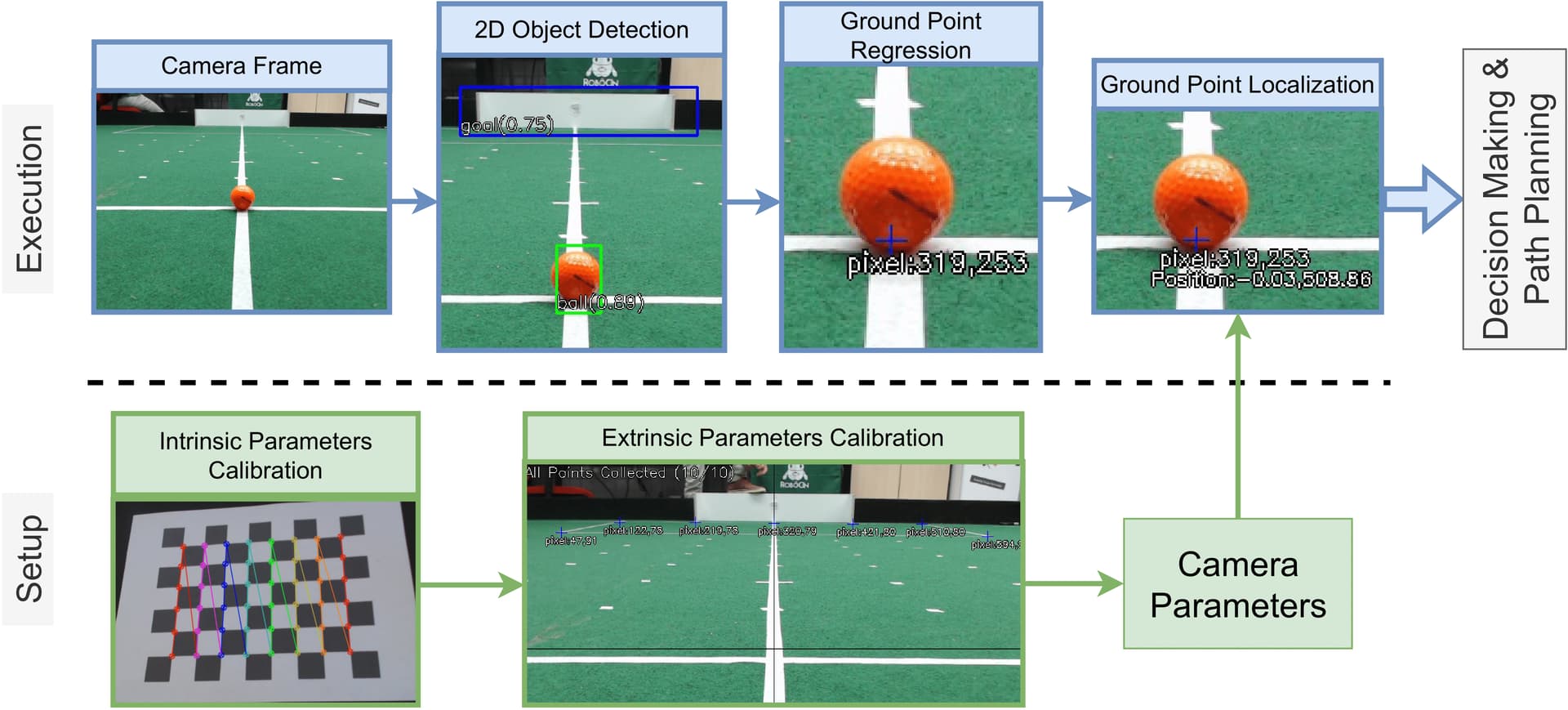

Figure 4. The soccer robot’s camera aids object detection along with field of vision for decision making and path planning

Project software and AI

The team used OpenCV2 calibration and pose computation techniques to extract the “intrinsic and extrinsic parameters” of the monocular camera, which is fixed to the robot. They used SSD MobileNet v2 to detect objects’ 2D bounding boxes on camera frames. They then applied linear regression to the bounding-box coordinates produced by SSD MobileNet, together with the precalibrated camera parameters, to assign a point on the field to each object’s bottom center. That point gives the object’s position relative to the camera, and therefore relative to the robot, too.
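To make the idea of turning a bounding box into a field position more concrete, here is a minimal sketch. It is not the team's regression-based method: it substitutes a plain pinhole-camera ray and ground-plane intersection, ignores lens distortion, and assumes the camera only pitches downward. The intrinsic matrix `K`, the camera height, and the pitch angle are hypothetical inputs that would come from calibration.

```python
import math
import numpy as np


def bbox_bottom_center(box):
    """box = (x_min, y_min, x_max, y_max) in pixels."""
    x_min, _, x_max, y_max = box
    return (0.5 * (x_min + x_max), y_max)


def pixel_to_ground(pixel, K, cam_height_m, pitch_rad):
    """Intersect the camera ray through `pixel` with the ground plane.

    Returns (forward, left) in meters relative to the point on the floor
    directly below the camera, or None if the ray never hits the ground.
    Assumed frames: camera = (right, down, forward); robot = (forward,
    left, up). pitch_rad > 0 means the camera looks downward.
    """
    u, v = pixel
    ray_cam = np.linalg.inv(K) @ np.array([u, v, 1.0])
    s, c = math.sin(pitch_rad), math.cos(pitch_rad)
    # Columns are the camera axes (right, down, forward) in the robot frame.
    R = np.array([[0.0, -s, c],
                  [-1.0, 0.0, 0.0],
                  [0.0, -c, -s]])
    ray = R @ ray_cam
    if ray[2] >= 0.0:
        return None  # ray points at or above the horizon
    t = cam_height_m / -ray[2]  # scale at which the ray reaches the floor
    return (t * ray[0], t * ray[1])
```

The bottom center of the box is used because it is the part of the object that touches the floor, so the ground-plane assumption holds there even though the rest of the object is above the field.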

Results

The team is pleased with how their robot played in this year’s challenge. Highlights include:

- Grabbing a stationary ball: In 12 out of 15 attempts, the robot was able to stop with the ball touching its dribbler, an 80% success rate.

- Scoring a goal: A goal was scored in 12 of the 15 runs, an 80% success rate.

- Passing: The robot passed the ball in 7 of the 15 tries, resulting in a 46.7% success rate.

Visit RoboCup 2023 Results to see the full list of results. The team has participated in the RoboCup Small Size League since 2019 and won its first world title in 2022 (Division B). They are currently three-time Latin American champions. The RobôCIn Small Size League Extended Team Description Paper for RoboCup 2023 presents the improvements the team made to their project on the way to the Small Size League (SSL) Division B title at RoboCup 2023, held in Bordeaux, France, in late July, where they took first place.

Figure 5. The robot grabbing a stationary ball (left) and scoring a goal (right)

Future plans

Guilherme shared some insights about challenges their team encountered in competition, and opportunities for improvement for future events. He noted that most of the failures were due to false-positive detections from objects outside the field. “We are working on a solution for detecting the field boundaries and applying a mask to discard those objects,” he said.
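One way such a mask could be applied, once the field boundary has been detected, is sketched below. This is an illustrative guess at the filtering step only, not the team's solution: `field_mask` is a hypothetical boolean image produced by some separate boundary detector, and a detection is kept when the bottom center of its bounding box lands on a field pixel.

```python
import numpy as np


def filter_by_field_mask(boxes, field_mask):
    """Keep boxes whose bottom-center pixel lies on the field.

    boxes: iterable of (x_min, y_min, x_max, y_max) in pixels.
    field_mask: 2D boolean array (rows = image height, cols = width),
        True where a pixel belongs to the field.
    """
    height, width = field_mask.shape
    kept = []
    for box in boxes:
        x_min, _, x_max, y_max = box
        col = int(round(0.5 * (x_min + x_max)))
        row = int(round(y_max))
        # Clamp so boxes touching the image border still index safely.
        col = min(max(col, 0), width - 1)
        row = min(max(row, 0), height - 1)
        if field_mask[row, col]:
            kept.append(box)
    return kept
```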

The team needs faster object detection solutions. “Even though we are able to execute basic skills so far, 30 FPS is still a low processing speed for the SSL environment. At the main competition, cameras usually operate at 70 FPS,” he said.
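A quick back-of-the-envelope calculation shows why frame rate matters here. Using the top speeds quoted earlier for SSL play (6.5 m/s for the ball and 3.7 m/s for robots), the snippet below computes how far each travels between two consecutive frames; the helper name is just for illustration.

```python
def travel_per_frame(speed_m_s, fps):
    """Distance (m) an object moves between two consecutive frames."""
    return speed_m_s / fps


for fps in (30, 70):
    ball = travel_per_frame(6.5, fps)
    robot = travel_per_frame(3.7, fps)
    print(f"{fps} FPS: ball {ball:.3f} m, robot {robot:.3f} m per frame")
```

At 30 FPS a ball at top speed moves roughly 0.22 m between frames, compared with about 0.09 m at 70 FPS, so the robot reacts to noticeably staler position estimates.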

The robot’s skills were implemented using only relative positions from detected objects–that is, without the knowledge of the robot’s self-localization on the field. “We believe this information might be useful for optimizing our performance in the soccer tasks, while also allowing us to avoid penalties,” Guilherme noted. For example, the robot should not enter the goalkeeper’s area. “We are working on a self-localization algorithm based on Monte Carlo Localization (MCL) and will share it in the coming months.”
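For readers unfamiliar with Monte Carlo Localization, the sketch below shows the generic predict, weight, and resample cycle of a particle filter. It is a simplified illustration and not the team's algorithm: particles hold only an (x, y) position with no heading, and the measurement model is an assumed range reading to a single known landmark such as a goal post.

```python
import numpy as np


def predict(particles, delta, noise_std, rng):
    """Move every particle by the odometry step plus Gaussian noise."""
    return particles + delta + rng.normal(0.0, noise_std, particles.shape)


def update_weights(particles, landmark, measured_range, range_std):
    """Weight particles by how well they explain a range measurement."""
    expected = np.linalg.norm(particles - landmark, axis=1)
    error = measured_range - expected
    # Gaussian likelihood; the tiny constant avoids an all-zero weight vector.
    weights = np.exp(-0.5 * (error / range_std) ** 2) + 1e-300
    return weights / weights.sum()


def resample(particles, weights, rng):
    """Draw a new particle set with probability proportional to weight."""
    idx = rng.choice(len(particles), size=len(particles), p=weights)
    return particles[idx]


def estimate(particles, weights):
    """Weighted mean position of the particle set."""
    return np.average(particles, axis=0, weights=weights)
```

In use, the cycle would run once per camera frame: `predict` with the odometry reported by the microcontroller, `update_weights` with whatever field features the detector sees, then `resample` so that particles cluster around poses consistent with both.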

The team plans to add more features to the robot’s system in the future (such as field line detection, localization algorithms, and path planning), and they will be working to optimize each part of the system for those needs.

In addition, the team continues to work on solutions for detecting field boundaries and lines, and estimating the robot’s self-localization. They also plan to replace the Jetson Nano with a Jetson Orin Nano so they can achieve faster processing speeds with their robot. That upgrade should help the team compete more effectively in league play.

To learn more about the team’s original project, visit the Developer Forum and GitHub. Explore Jetson Community Projects for more ideas and inspiration from your fellow robotics developers.

Source: NVIDIA