New research is boosting the creative potential of generative AI with a text-guided image-editing tool. The innovative study presents a framework using plug-and-play diffusion features (PnP DFs) that guides realistic and precise image generation. With this work, visual content creators can transform images into visuals with just a single prompt image and a few descriptive words.

The ability to edit and generate content reliably and with ease has the potential to expand creative possibilities for artists, designers, and creators. It could also strengthen industries reliant on animation, visual design, and image editing.

“Recent text-to-image generative models mark a new era in digital content creation. However, the main challenge in applying them to real-world applications is the lack of user-controllability, which has been largely restricted to guiding the generation solely through input text. Our work is one of the first methods to provide users with control over the image layout,” said Narek Tumanyan, a lead author and Ph.D. candidate at the Weizmann Institute of Science.

Recent breakthroughs in generative AI are unlocking new approaches for developing powerful text-to-image models. However, complexities, ambiguity, and the need for custom content limit current rendering techniques.

The study introduces a novel approach using PnP DFs that improves the image editing and generation process, giving creators greater control over their final product.

The researchers start with a simple question: How is the shape, or the outline of an image, represented and captured by diffusion models? The study explores the internal representations of images as they evolve over the generation process and examines how these representations encode shape and semantic information.

The new method controls the generated layout without training or fine-tuning a diffusion model, relying instead on an understanding of how spatial information is encoded in a pretrained text-to-image model. During generation, the model extracts diffusion features from a supplied guidance image and injects them into each step of the process, resulting in fine-grained control over the structure of the new image.

By incorporating these spatial features, the diffusion model refines the new image to match the guidance structure. It does this iteratively, updating image features until it lands on a final image that preserves the guide image layout while also matching the text prompt.
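The extract-then-inject loop described above can be illustrated with a toy sketch. This is not the authors' implementation: there is no diffusion model here, and every name (`toy_features`, `toy_step`, `prompt_bias`, `layout`) is hypothetical. It assumes a one-dimensional "image", a single feature layer, and a scalar offset standing in for the text prompt, purely to show features being recorded from a guidance pass and reused at each generation step.

```python
from typing import List, Optional, Tuple

Vector = List[float]


def toy_features(latent: Vector) -> Vector:
    """Hypothetical stand-in for a spatial feature map inside the network."""
    return [2.0 * value for value in latent]


def toy_step(latent: Vector, prompt_bias: float,
             injected: Optional[Vector] = None) -> Tuple[Vector, Vector]:
    """Run one toy generation step and return (next_latent, features_used)."""
    # Injection: if guidance features are supplied, they replace our own.
    features = toy_features(latent) if injected is None else injected
    # The scalar bias is a toy substitute for text conditioning.
    next_latent = [0.5 * f + prompt_bias for f in features]
    return next_latent, features


def record_guidance_features(guidance: Vector, steps: int) -> List[Vector]:
    """Pass the guidance signal through every step and save its features."""
    saved: List[Vector] = []
    latent = guidance
    for _ in range(steps):
        latent, features = toy_step(latent, prompt_bias=0.0)
        saved.append(features)
    return saved


def generate_with_injection(start: Vector, prompt_bias: float,
                            saved: List[Vector]) -> Vector:
    """Generate a new signal, injecting the saved features at each step."""
    latent = start
    for features in saved:
        latent, _ = toy_step(latent, prompt_bias, injected=features)
    return latent


def layout(signal: Vector) -> Vector:
    """Differences between neighbors: a crude proxy for structure."""
    return [b - a for a, b in zip(signal, signal[1:])]


guidance = [0.0, 1.0, 3.0, 2.0]   # toy guidance "image"
start = [5.0, 5.0, 5.0, 5.0]      # toy starting latent
saved = record_guidance_features(guidance, steps=3)
result = generate_with_injection(start, prompt_bias=10.0, saved=saved)

print(result)                              # [10.0, 11.0, 13.0, 12.0]
print(layout(result) == layout(guidance))  # True
```

In this sketch the output keeps the guidance signal's neighbor-to-neighbor differences (its "layout") while its overall level shifts with the prompt offset. Because the toy overwrites all features, the starting latent has no effect on the result; that is an exaggeration for clarity and should not be read as a property of the real model.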

“This results in a simple and effective approach, where features extracted from the guidance image are directly injected into the generation process of the translated image, requiring no training or fine-tuning,” the authors write.

This method paves the way for more advanced controlled generation and manipulation methods.

Video 1. An overview of the study “Plug-and-Play Diffusion Features for Text-Driven Image-to-Image Translation” being presented at the 2023 Conference on Computer Vision and Pattern Recognition (CVPR)

The researchers developed and tested the PnP model with the cuDNN-accelerated PyTorch framework on a single NVIDIA A100 GPU. According to the team, the large capacity of the GPU made it possible for them to focus on method development. The researchers were awarded an A100 as recipients of the NVIDIA Applied Research Accelerator Program.

Deployed on the A100, the framework generates a new image from the guidance image and text in about 50 seconds.

The process is both effective and reliable, producing imagery that follows the guidance layout and the text prompt. It also works beyond photographs, translating sketches, drawings, and animations, and can modify lighting, color, and backgrounds.

Figure 1. Sample results of the method preserving the structure of the guidance origami image while matching the description of the target prompt (credit: Tumanyan, Narek, et al./CVPR 2023)

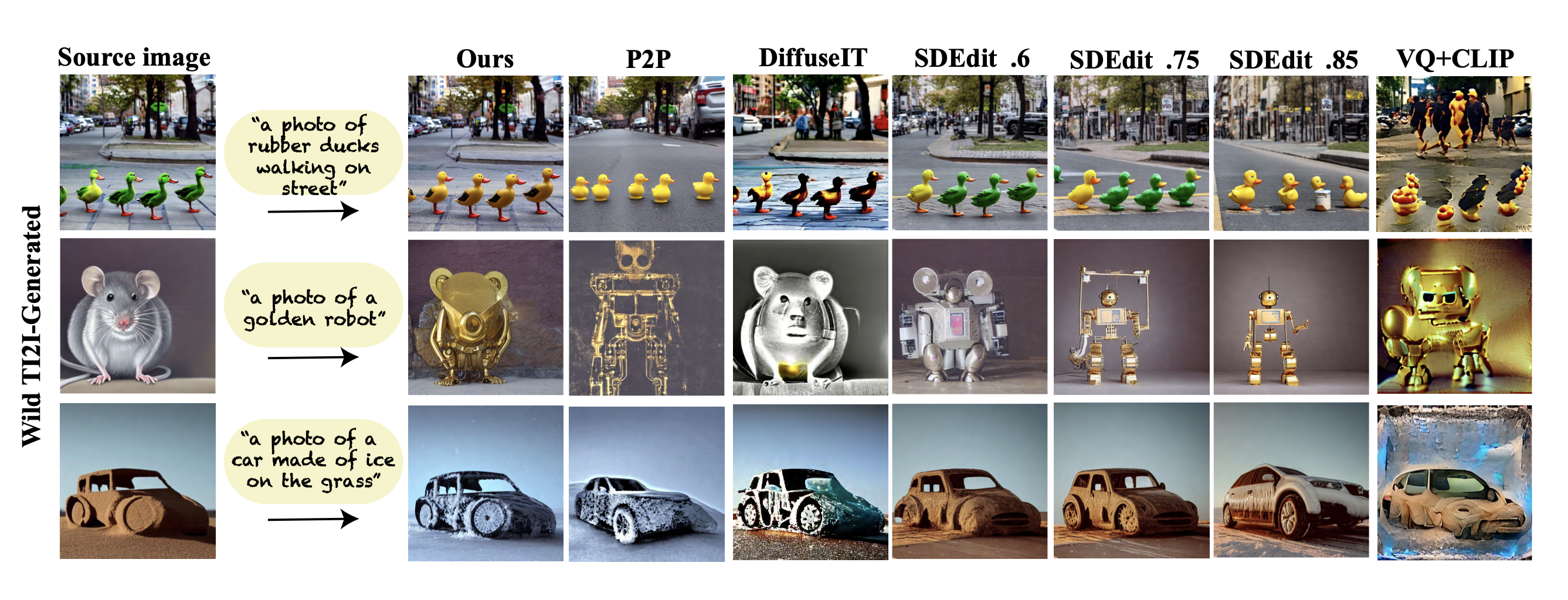

Their method also outperforms existing text-to-image models, achieving a superior balance between preserving the guidance layout and deviating from its appearance.

Figure 2. Sample results comparing the model with P2P, DiffuseIT, SDedit with three different noising levels, and VQ+CLIP models (credit: Tumanyan, Narek, et al./CVPR 2023)

However, the model does have limitations. It does not perform well when editing image sections with arbitrary colors, as the model cannot extract semantic information from the input image.

The researchers are currently working on extending this approach to text-guided video editing. The work is also proving valuable to other research that analyzes the internal representations of images in diffusion models.

For instance, one study is employing the team’s research insights to improve computer vision tasks, such as semantic point correspondence. Another focuses on expanding text-to-image generation controls, including the shape, placement, and appearance of objects.

The research team, from the Weizmann Institute of Science, is presenting their study at CVPR 2023. The work is also open source on GitHub.

Learn more on the team’s project page.

Read the study, “Plug-and-Play Diffusion Features for Text-Driven Image-to-Image Translation.”

See NVIDIA research delivering AI breakthroughs at CVPR 2023.

Source: NVIDIA