The pace of 5G investment and adoption is accelerating. According to the GSMA Mobile Economy 2023 report, nearly $1.4 trillion will be spent on 5G CAPEX between 2023 and 2030. The radio access network (RAN) may account for over 60% of that spend.

Increasingly, CAPEX spend is moving from the traditional approach built on proprietary hardware to virtualized RAN (vRAN) and Open RAN architectures, which can benefit from cloud economics and do not require dedicated hardware. Despite these advantages, Open RAN adoption is struggling because existing technology has yet to deliver the benefits of cloud economics and cannot deliver high performance and flexibility at the same time.

NVIDIA has overcome these challenges with the NVIDIA AX800 converged accelerator, delivering a truly cloud-native and high-performance accelerated 5G solution on commodity hardware that can run on any cloud (Figure 1).

To benefit from cloud economics, the future of the RAN is in the cloud (RAN-in-the-Cloud). The road to cloud economics aligns with Clayton Christensen’s characterization of disruptive innovation in traditional industries as presented in his book, The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail. That is, with progressive incremental improvements, new and seemingly inferior products are able to eventually capture market share.

Existing Open RAN solutions cannot support non-5G workloads and still deliver inferior 5G performance. Most of them still rely on single-use hardware accelerators. This limits their appeal to telecom executives, because traditional solutions offer better comparative performance and a tried-and-tested deployment plan for 5G.

However, the NVIDIA Accelerated 5G RAN solution based on NVIDIA AX800 has overcome these limitations and now delivers performance comparable to traditional 5G solutions. This paves the way to deploy 5G Open RAN on commercial-off-the-shelf (COTS) hardware in any public cloud or at the telco edge.

Figure 1. NVIDIA AX800 converged accelerator and NVIDIA Aerial SDK deliver a compelling advantage for the Open RAN ecosystem

Solutions to support cloud-native RAN

To drive broad adoption of cloud-native RAN, the industry needs solutions that are cloud native, deliver high RAN performance, and are built with AI capability.

Cloud native

This approach delivers better utilization, multi-use and multi-tenancy, lower TCO, and increased automation—with all the virtues of cloud computing and benefiting from cloud economics.

A cloud-native network that benefits from cloud economics requires a complete rethink in approach to deliver a network that is 100% software-defined, deployed on general-purpose hardware and can support multi-tenancy. As such, it is not about building bespoke and dedicated systems in the public or telco cloud managed by Cloud Service Providers (CSPs).

High RAN performance

High RAN performance is required to deliver new technology—such as massive MIMO with its improved spectral efficiency, cell density, and higher throughput—all with improved energy efficiency. Achieving high performance on commodity hardware that is comparable to the performance of dedicated systems is proving a formidable challenge. This is due to the death of Moore’s Law and the relatively low performance achieved by software running on CPUs.

As a result, RAN vendors are building fixed-function accelerators to improve the CPU performance. This approach leads to inflexible solutions and does not meet the flexibility and openness expectations for Open RAN. In addition, with fixed-function or single-use accelerators, the benefits of cloud economics cannot be achieved.

For example, software-defined 5G networks that are based on Open RAN specifications and COTS hardware are achieving typical peak throughput of ~10 Gbps compared to >30 Gbps peak throughput performance on 5G networks that are built in the traditional, vertically integrated, appliance approach using bespoke software and hardware.

According to a recent survey of 52 telco executives reported in Telecom Networks: Tracking the Coming xRAN Revolution, “In terms of obstacles to xRAN deployment, 62% of operators voice concerns regarding xRAN performance today relative to traditional RAN.”

AI capability

Solutions must evolve from today's applications, which are based on proprietary implementations in the telecom network, toward an AI-capable infrastructure for hosting internal and external applications. AI plays a role in 5G (AI-for-5G) to automate and improve system performance. Likewise, AI works together with 5G (AI-on-5G) to enable new features in 5G and beyond.

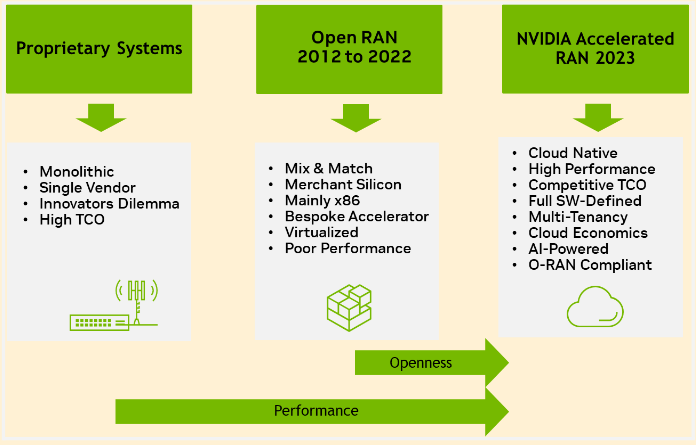

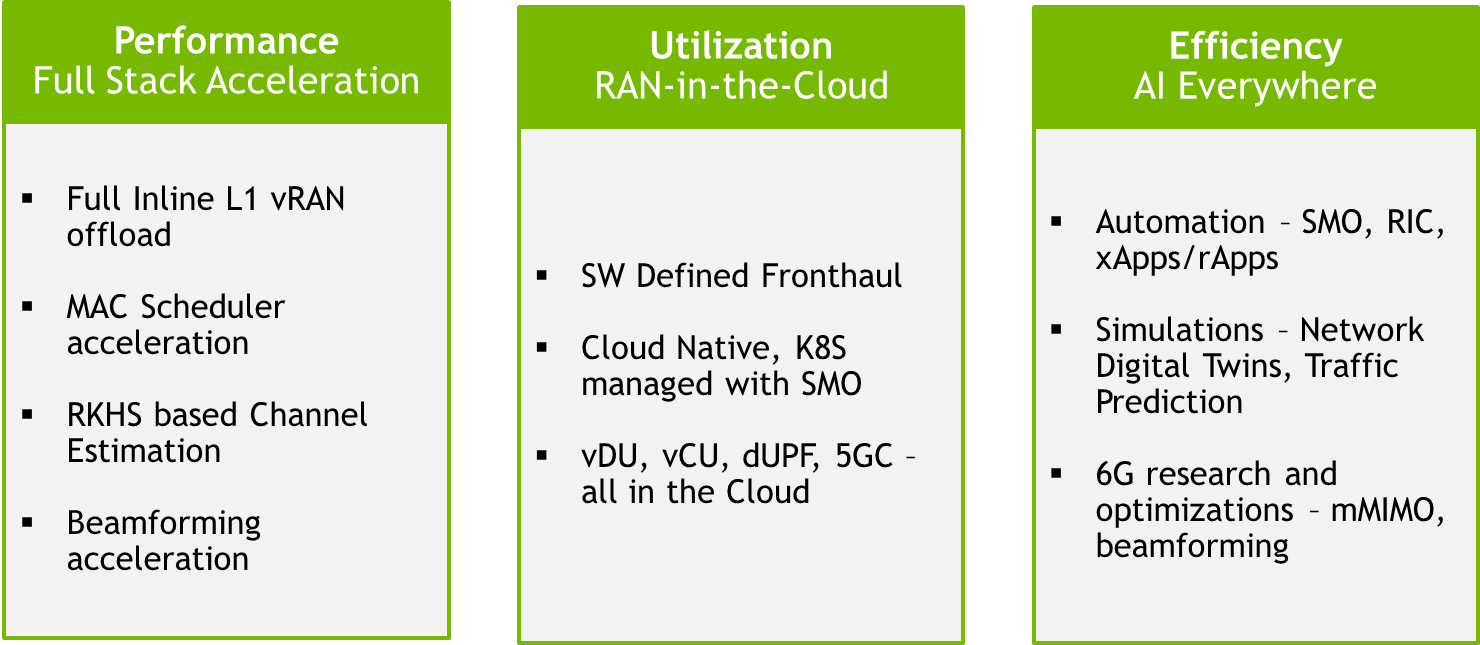

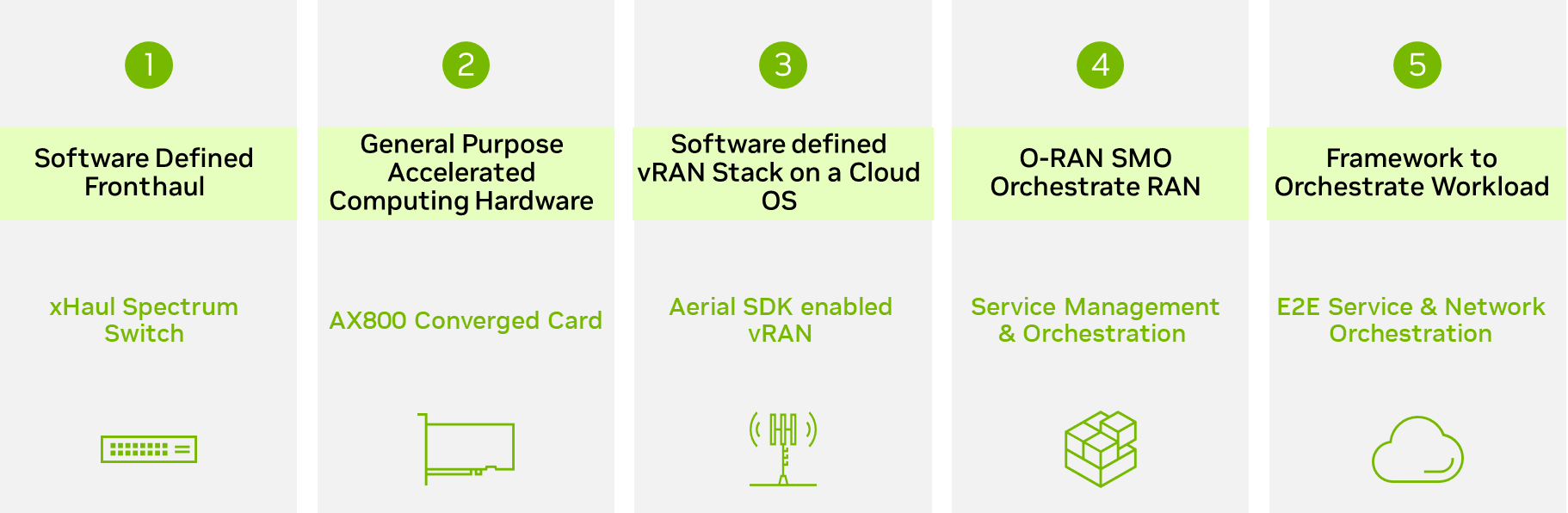

Achieving these goals requires a new architectural approach for cloud-native RAN, especially with a general-purpose COTS-based accelerated computing platform. This is the NVIDIA focus, as summarized in Figure 2.

The emphasis is on delivering a general-purpose COTS server built with NVIDIA converged accelerators (such as the NVIDIA AX800) that can support high-performance 5G and AI workloads on the same platform. This will deliver cloud economics with better utilization and reduced TCO, and a platform that can efficiently run AI workloads to future proof the RAN for the 6G era.

Figure 2. NVIDIA technological innovations transforming the RAN

Run 5G and AI workloads on the same accelerator with NVIDIA AX800

The NVIDIA AX800 converged accelerator is a game changer for CSPs and telcos because it brings cloud economics into the operations and management of telecom networks. By dynamically scaling workloads, the AX800 supports multi-use and multi-tenancy of both 5G and AI workloads on commodity hardware that can run on any cloud. In doing so, it enables CSPs and telcos to use the same infrastructure for both 5G and AI with high utilization levels.

Dynamic scaling for multi-tenancy

The NVIDIA AX800 achieves dynamic scaling, both at the data center and at the server and card levels, enabling support of 5G and AI workloads. This scalable, flexible, energy-efficient, and cost-effective approach can deliver a variety of applications and services.

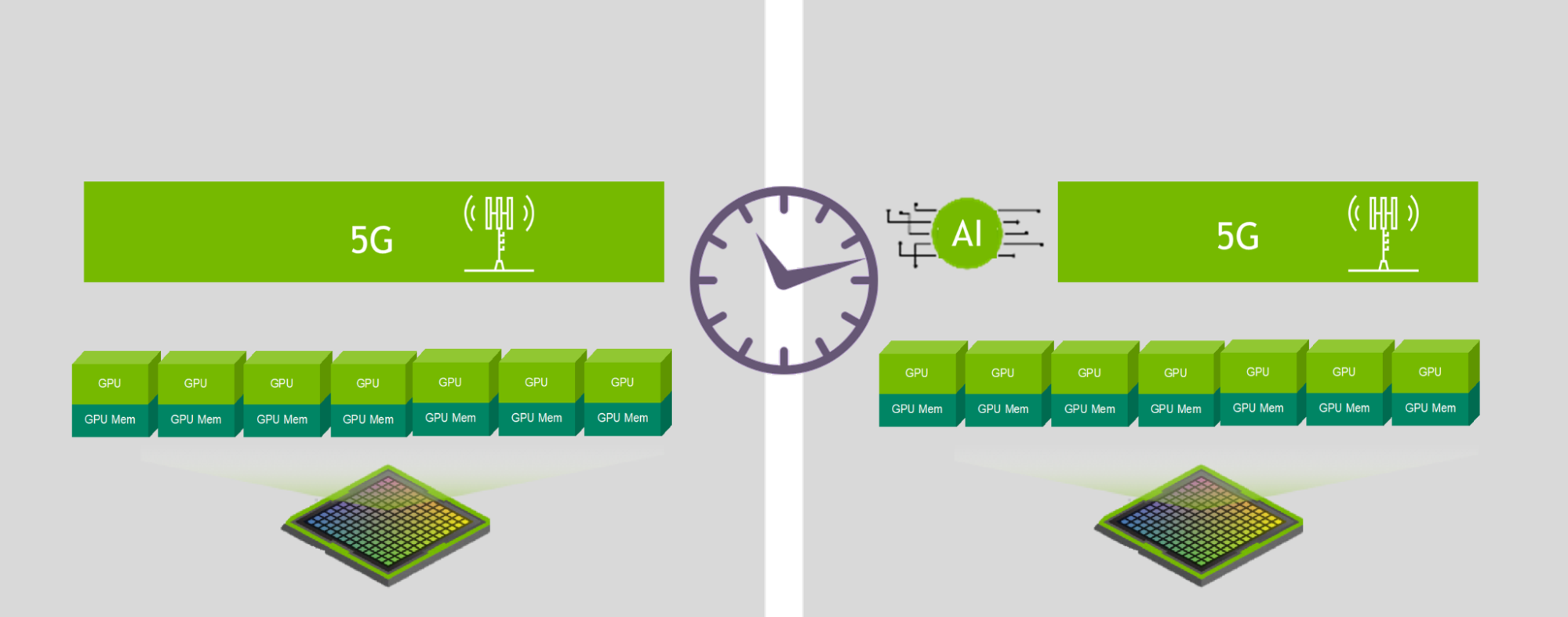

At the data center and server levels, the NVIDIA AX800 supports dynamic scaling through the Open RAN Service Management and Orchestration (SMO) framework, which can allocate and reallocate computational resources in real time to support either 5G or AI workloads.

At the card level, NVIDIA AX800 supports dynamic scaling using NVIDIA Multi-Instance GPU (MIG), as shown in Figure 3. MIG enables concurrent processing of virtualized 5G base stations and edge AI applications on pooled GPU hardware resources. This enables each function to run on the same server and accelerator in a coherent and energy-conscious manner.

This novel approach provides increased radio capacity and processing power, contributing to better performance and enabling peak data throughput processing with room for future antenna technology advancements.
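To make card-level scaling concrete, the following is a minimal sketch of how an orchestrator might decide how many GPU instances to give 5G versus AI as RAN load changes. It assumes seven instances per card, the maximum the article cites for the AX800. The `Split` type, the `plan_split` function, and the proportional policy are hypothetical illustrations, not NVIDIA's method, and the sketch does not call any real MIG or SMO API.

```python
import math
from dataclasses import dataclass

# Assumption: one card exposes up to seven GPU instances, per the article.
MAX_INSTANCES = 7


@dataclass(frozen=True)
class Split:
    """How many GPU instances go to each workload class."""
    ran_instances: int
    ai_instances: int


def plan_split(ran_load: float, min_ran: int = 1, total: int = MAX_INSTANCES) -> Split:
    """Hypothetical policy: size the 5G share in proportion to RAN load.

    ran_load is the current RAN demand as a fraction of peak (0.0 to 1.0).
    At least min_ran instances stay reserved for 5G so the base station
    keeps running; everything else is offered to AI workloads.
    """
    if not 0.0 <= ran_load <= 1.0:
        raise ValueError("ran_load must be between 0.0 and 1.0")
    wanted = math.ceil(ran_load * total)     # round up so 5G is never short
    ran = max(min_ran, min(total, wanted))   # clamp to [min_ran, total]
    return Split(ran_instances=ran, ai_instances=total - ran)


if __name__ == "__main__":
    for load in (0.0, 0.5, 1.0):
        print(load, plan_split(load))
```

Rounding up and reserving a floor for 5G reflects one possible design choice: connectivity is treated as the workload that must not be starved, while AI jobs absorb whatever capacity is left over at off-peak times.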

Figure 3. NVIDIA Multi-Instance GPU redistribution based on workload and resource requirements

Commercial implications of dynamic scaling for multi-tenancy

The rationale for pooling 5G RAN in the cloud (RAN-in-the-Cloud) is straightforward. The RAN constitutes the largest share of CAPEX and OPEX spending for telcos (>60%). Yet the RAN is also the most underutilized resource, with most radio base stations typically operating below 50% utilization.

Moving RAN compute into the cloud brings all the benefits of cloud computing: pooling and higher utilization in a shared cloud infrastructure, resulting in the largest CAPEX and OPEX reduction for telcos. It also supports cloud-native scale-in and scale-out and dynamic resource management.

Dynamic scaling for multi-tenancy is commercially significant in three ways. First, it enables deployment of 5G and AI on general-purpose computing hardware, paving the way to run the 5G RAN on any cloud, whether the public cloud or the telco edge cloud (telco base station). As all general computing workloads migrate to the cloud, it is clear that the future of the RAN will also be in the cloud. NVIDIA is a leading industry voice in realizing this vision, as detailed in RAN-in-the-Cloud: Delivering Cloud Economics to 5G RAN.

Second, dynamic scaling leverages cloud economics to deliver ROI improvements to telecom networks. Instead of the typical TCO challenges with single-use solutions, multi-tenancy enables the same infrastructure to be used for multiple workloads, hence increasing utilization.

Telcos and enterprises are already using the cloud for mixed workloads, which are spike-sensitive, expensive, and consist of many one-off “islands.” Likewise, telcos and enterprises are increasingly using NVIDIA GPU servers to accelerate edge AI applications. The NVIDIA AX800 provides an easy path to use the same GPU resources for accelerating the 5G RAN connectivity, in addition to edge AI applications.

Third, the opportunity for dynamic scaling using NVIDIA AX800 provides marginal utility to telcos and CSPs who are already investing in NVIDIA systems and solutions to power their AI (especially generative AI) services.

Current demand for NVIDIA compute, especially to support generative AI applications, is very high. As such, once the investment is made, deriving additional marginal utility from running 5G and generative AI applications together accelerates the ROI on NVIDIA accelerated compute.

Figure 4. NVIDIA RAN-in-the-Cloud building blocks

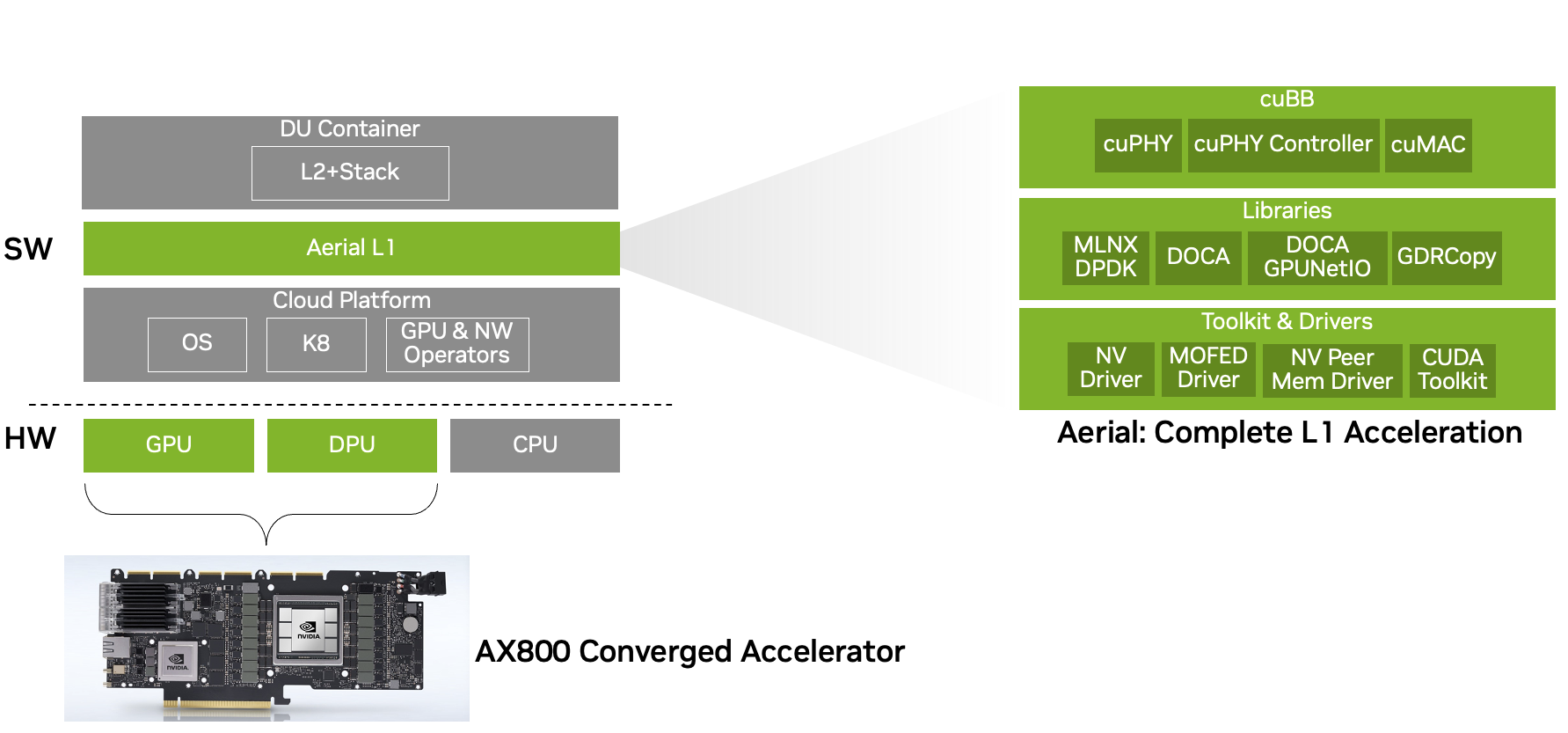

NVIDIA AX800 delivers performance improvements for software-defined 5G

The NVIDIA AX800 converged accelerator delivers 36 Gbps throughput on a 2U server, when running NVIDIA Aerial 5G vRAN, substantially improving the performance for a software-defined, commercially available Open RAN 5G solution.

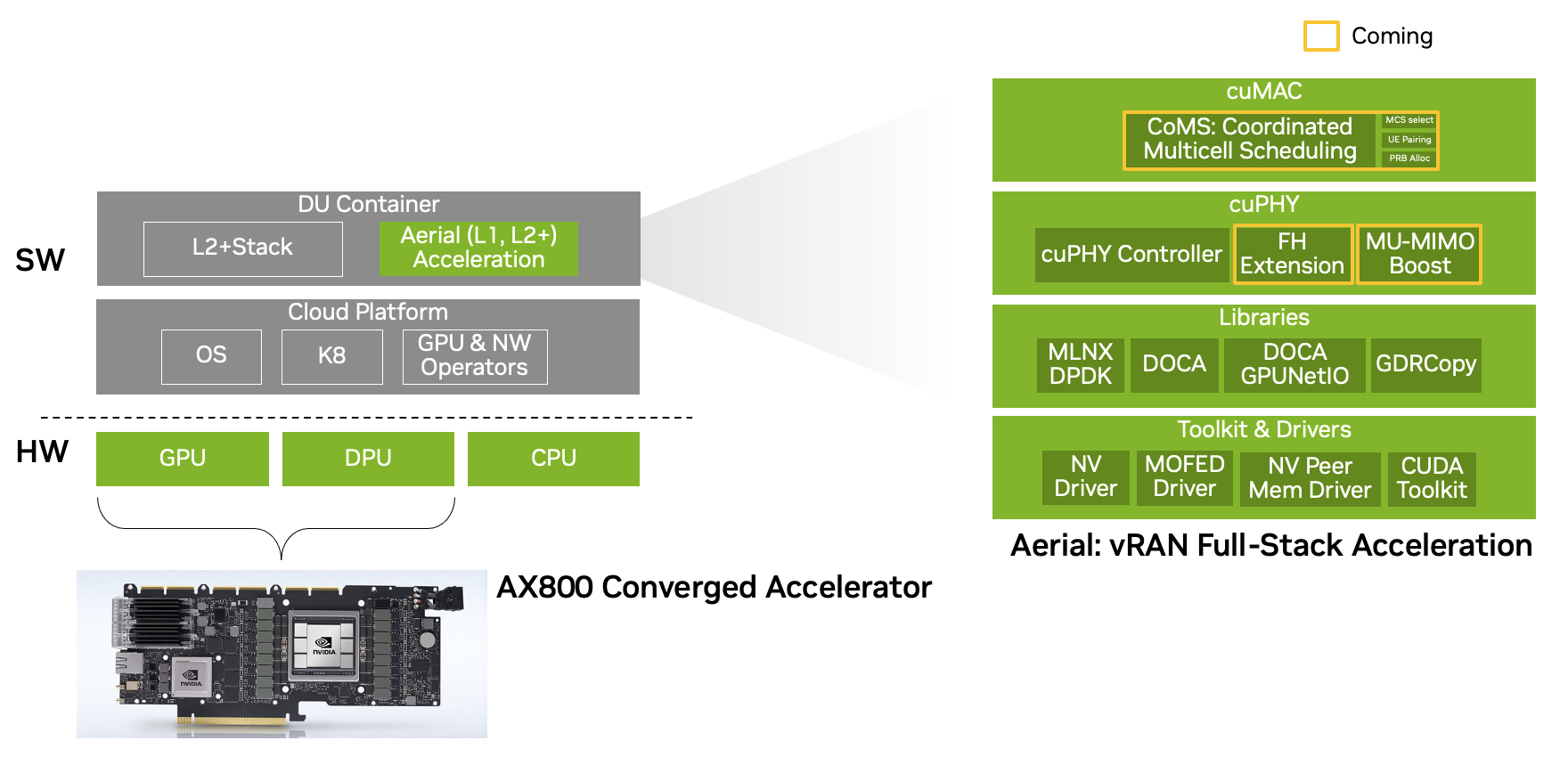

This is a significant performance improvement over the typical peak throughput of ~10 Gbps of existing Open RAN solutions. It compares favorably with the >30 Gbps peak throughput of 5G networks that are built in the traditional way. It achieves this today by accelerating the physical layer (Layer 1, or L1) of the NVIDIA Aerial 5G vRAN stack (Figure 5). Further performance breakthroughs are in the pipeline, as the NVIDIA AX800 can be leveraged for the full 5G stack in the near future (Figure 6).

The NVIDIA AX800 converged accelerator combines NVIDIA Ampere architecture GPU technology with the NVIDIA BlueField-3 DPU. It has nearly 1 TB/s of GPU memory bandwidth and can be partitioned into as many as seven GPU instances. NVIDIA BlueField-3 supports 256 threads, making the NVIDIA AX800 capable of high performance on the most demanding I/O-intensive workloads, such as L1 5G vRAN.

NVIDIA AX800 with NVIDIA Aerial together deliver this performance for 10 peak 4T4R cells on TDD at 100 MHz using four downlink (DL) and two uplink (UL) layers and 100% physical resource block (PRB) utilization. This enables the system to achieve 36.56 Gbps and 4.794 Gbps DL and UL throughput, respectively.
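As a quick sanity check on these figures, the short sketch below divides the aggregate throughput by the 10 cells to get a per-cell rate, and compares the downlink total against the ~10 Gbps typical peak cited earlier for existing Open RAN solutions. The helper names are hypothetical, and because the ~10 Gbps baseline is itself approximate, the resulting ratio should be read as indicative only.

```python
# Figures quoted in the article for the AX800 with NVIDIA Aerial.
CELLS = 10
DL_TOTAL_GBPS = 36.56
UL_TOTAL_GBPS = 4.794

# Approximate typical peak for existing Open RAN solutions, per the article.
OPEN_RAN_BASELINE_GBPS = 10.0


def per_cell(total_gbps: float, cells: int) -> float:
    """Average throughput per cell, assuming load is spread evenly."""
    if cells <= 0:
        raise ValueError("cells must be positive")
    return total_gbps / cells


def ratio(numerator_gbps: float, denominator_gbps: float) -> float:
    """Simple throughput ratio between two aggregate figures."""
    if denominator_gbps <= 0:
        raise ValueError("denominator must be positive")
    return numerator_gbps / denominator_gbps


if __name__ == "__main__":
    print(f"DL per cell: {per_cell(DL_TOTAL_GBPS, CELLS):.3f} Gbps")
    print(f"UL per cell: {per_cell(UL_TOTAL_GBPS, CELLS):.4f} Gbps")
    print(f"DL/UL ratio: {ratio(DL_TOTAL_GBPS, UL_TOTAL_GBPS):.2f}")
    print(f"DL vs baseline: {ratio(DL_TOTAL_GBPS, OPEN_RAN_BASELINE_GBPS):.2f}x")
```

The even split across cells is an assumption made for the arithmetic; the article reports only aggregate numbers at 100% PRB utilization.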

The NVIDIA solution is also highly scalable and can support from 2T2R (sub 1 GHz macro deployments) to 64T64R (massive MIMO deployments) configurations. Massive MIMO workloads with high layer counts are dominated by the computational complexity of algorithms for estimating and responding to channel conditions (for example, sounding reference signal channel estimator, channel equalizer, beamforming, and more).

The GPU, and specifically the AX800 (with the highest streaming multiprocessor count for NVIDIA Ampere architecture GPUs), offers the ideal solution to tackle the complexity of massive MIMO workloads at moderate power envelopes.

Figure 5. The NVIDIA AX800 converged accelerator delivers performance breakthroughs by accelerating the physical layer (Layer 1) of the NVIDIA Aerial 5G vRAN stack

Figure 6. The NVIDIA AX800 converged accelerator with 5G vRAN full-stack acceleration will extend the performance improvements of the NVIDIA Aerial 5G vRAN stack

Summary

The NVIDIA AX800 converged accelerator offers a new architectural approach to deploying 5G on commodity hardware on any cloud. It delivers 36 Gbps of throughput for software-defined 5G using the NVIDIA Aerial 5G vRAN stack, a substantial improvement over existing Open RAN solutions.

NVIDIA AX800 brings the vision of the RAN-in-the-Cloud closer to reality, offering telcos and CSPs a roadmap to move 5G RAN workloads into any cloud. There they can be dynamically combined with other AI workloads to improve infrastructure utilization, optimize TCO, and boost ROI. Likewise, the throughput improvement dramatically boosts the performance of Open RAN solutions, making them competitive with traditional 5G RAN options.

NVIDIA is working with CSPs, telcos, and OEMs to deploy the NVIDIA AX800 in commercial 5G networks. For more information, visit AI Solutions for Telecom.

Source: NVIDIA