The need for a high-fidelity multi-robot simulation environment is growing rapidly as more and more autonomous robots are being deployed in real-world scenarios. In this post, I will review what we used in the past at Cogniteam for simulating multiple robots, our current progress with NVIDIA Isaac Sim, and how Nimbus can speed up the development and maintenance of a multi-robot simulation with Isaac Sim.

Multi-robot simulation with Unreal Tournament game engine

About 20 years ago, my friends at Cogniteam and I started our robotic development careers with the idea of a robotic framework for multi-robot task allocation and teamwork. Originally called CogniTAO, a simplified version of this system was later published as ROS decision_making.

At the time, use cases for multiple robots were scarce, and 3D simulation for those robots was not possible. So I wrote a mod for the Unreal Tournament 2000-2004 game engine to enable simulation for four robots. It took our small team of four programmers about 3 years to develop a simulated environment that could reliably run for 15 minutes.

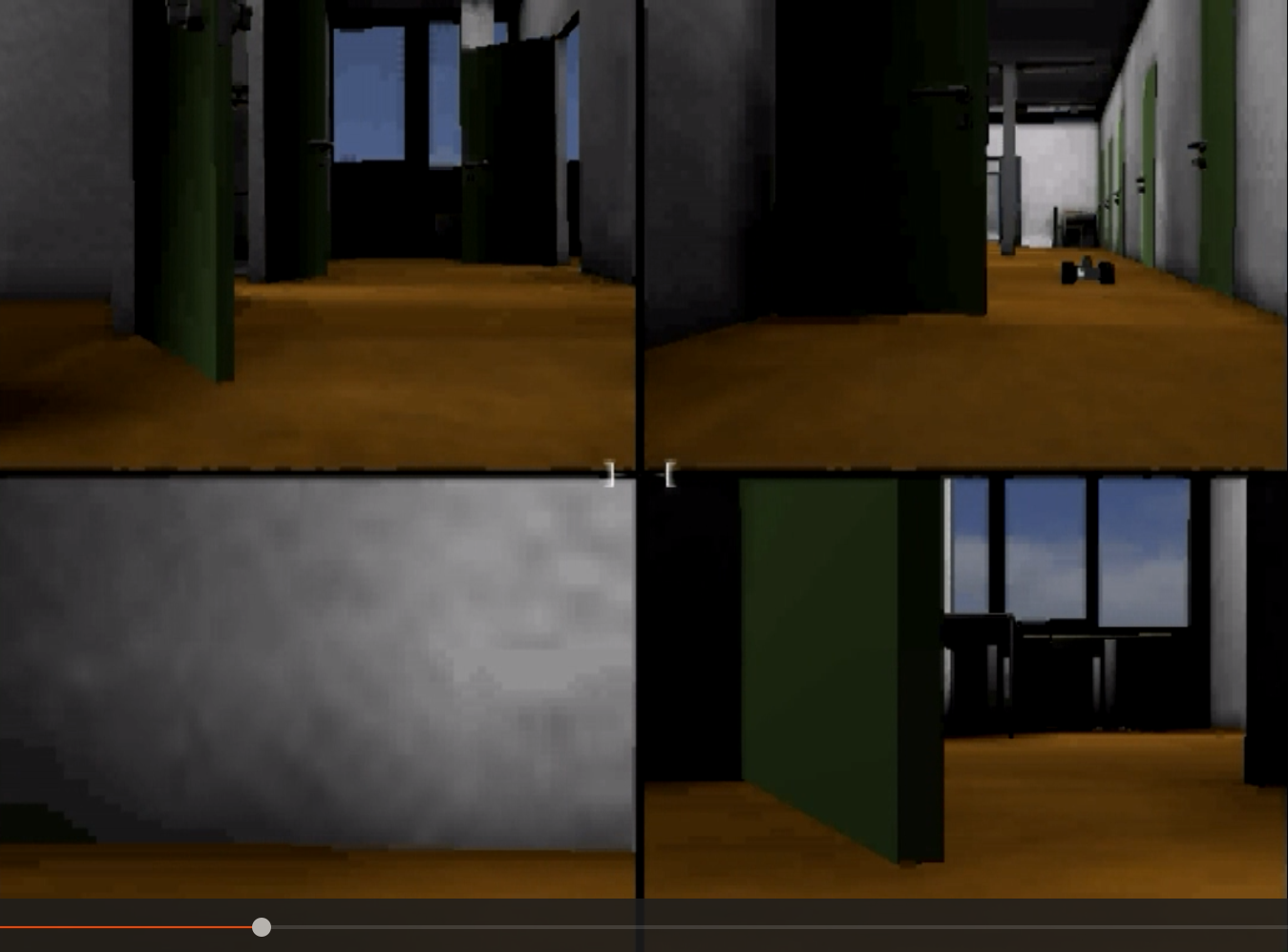

Figure 1. Simulation of four robots (left) and video from the robots (right)

This environment was able to simulate four robots with a camera, Hokuyo LiDAR, odometry, and mapping on five state-of-the-art desktops, and remotely receive video feeds from each. One of our engineers wrote a C++ TCP client that would stream the data on the local network directly from the game engine and display it in fullscreen. We had to run the code in strict order to make the robots spawn on time and in the correct place.

Multi-robot simulation with Gazebo

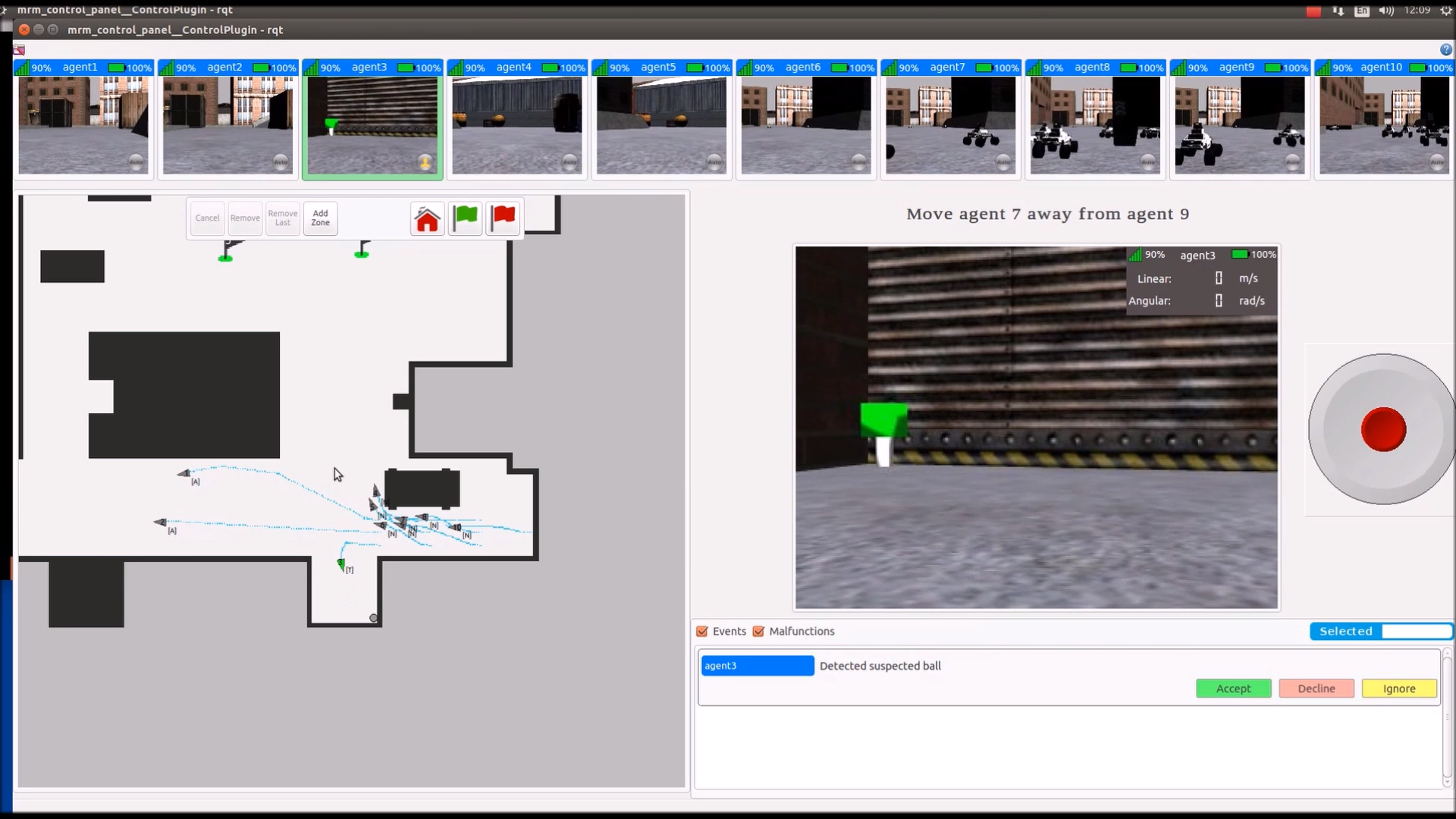

Fast forward 10 years to 2013, when we transitioned our work to Gazebo after it became the de facto platform for robotic simulation. It took three programmers about 2 years to simulate 10 robots on two Intel Xeon machines. The robots used the ROS move_base navigation stack for navigation and the OpenCV Hough Circle Transform for object detection, which is what robotics teams used for demos before TensorFlow. Igor Makhtes, our colleague at the time, built the RQT plugin to control and show data streams from multiple robots (Figure 2). It took him 6 months to complete.

Figure 2. Video feed and map view for 10 robots with RQT plugin

These robots had to communicate with each other, but also needed to operate when a connection was unavailable. To make this possible, each had to run its own ROS master and sync through a ROS multimaster network.

Multi-robot simulation with NVIDIA Isaac Sim

A few months ago, I asked Saar Moseri, a computer science student on our algorithmic team at Cogniteam, to set up a multi-robot simulation scenario using the cloud robotics ecosystem Nimbus and NVIDIA Isaac Sim. Our internal test team and I hoped to use the Nimbus agent to control our robots and view the data they generate.

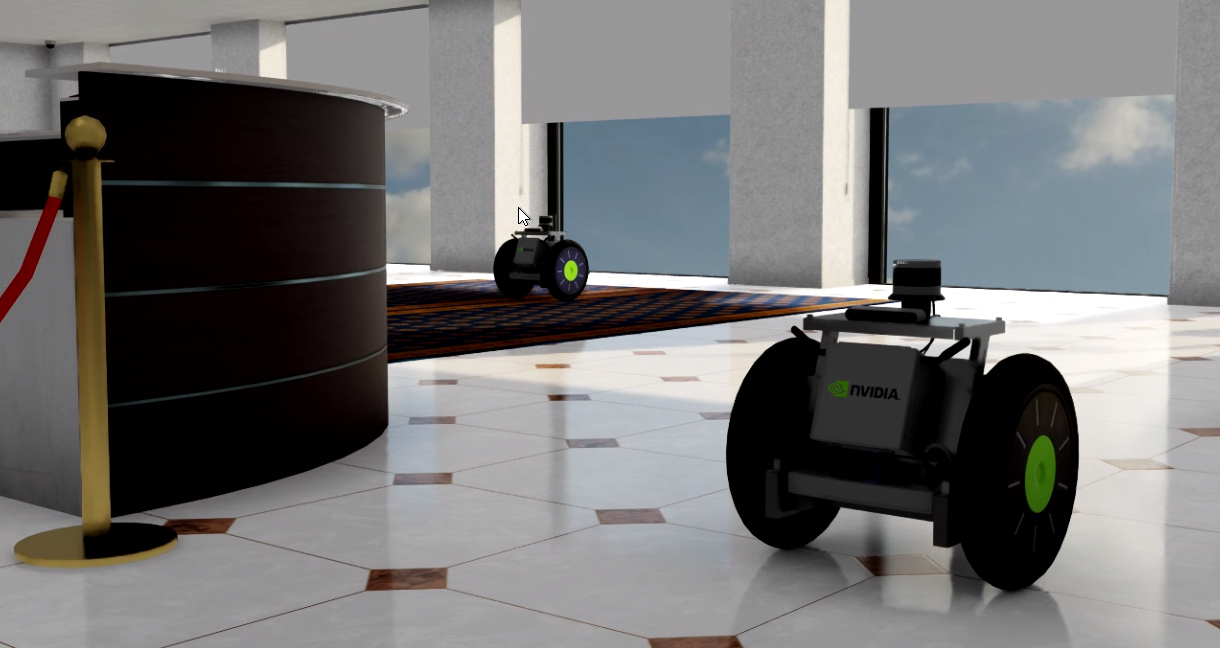

It took Saar about 2 weeks to familiarize himself with the environment and configure the system. Figure 3 shows the result of this effort, running on a standard (single) desktop machine with an NVIDIA GeForce RTX 3080 in the Cogniteam lab.

Figure 3. NVIDIA Isaac Sim multi-robot default setup

Saar used the Isaac Sim documentation available through NVIDIA NGC to install and set up the environment. Using Nimbus, he installed an agent on the simulation machine and created a gateway node to receive data from the simulation through ROS.

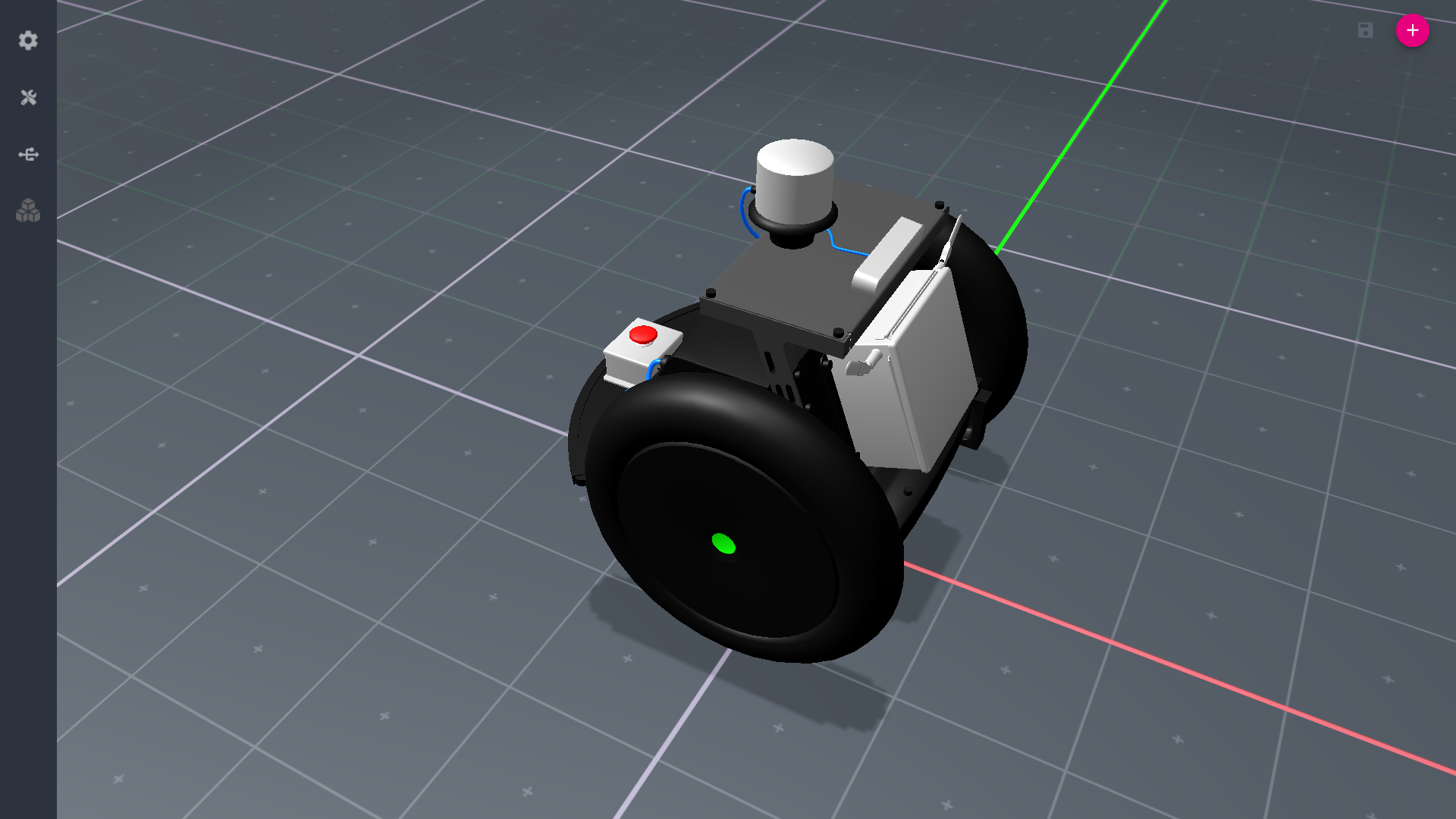

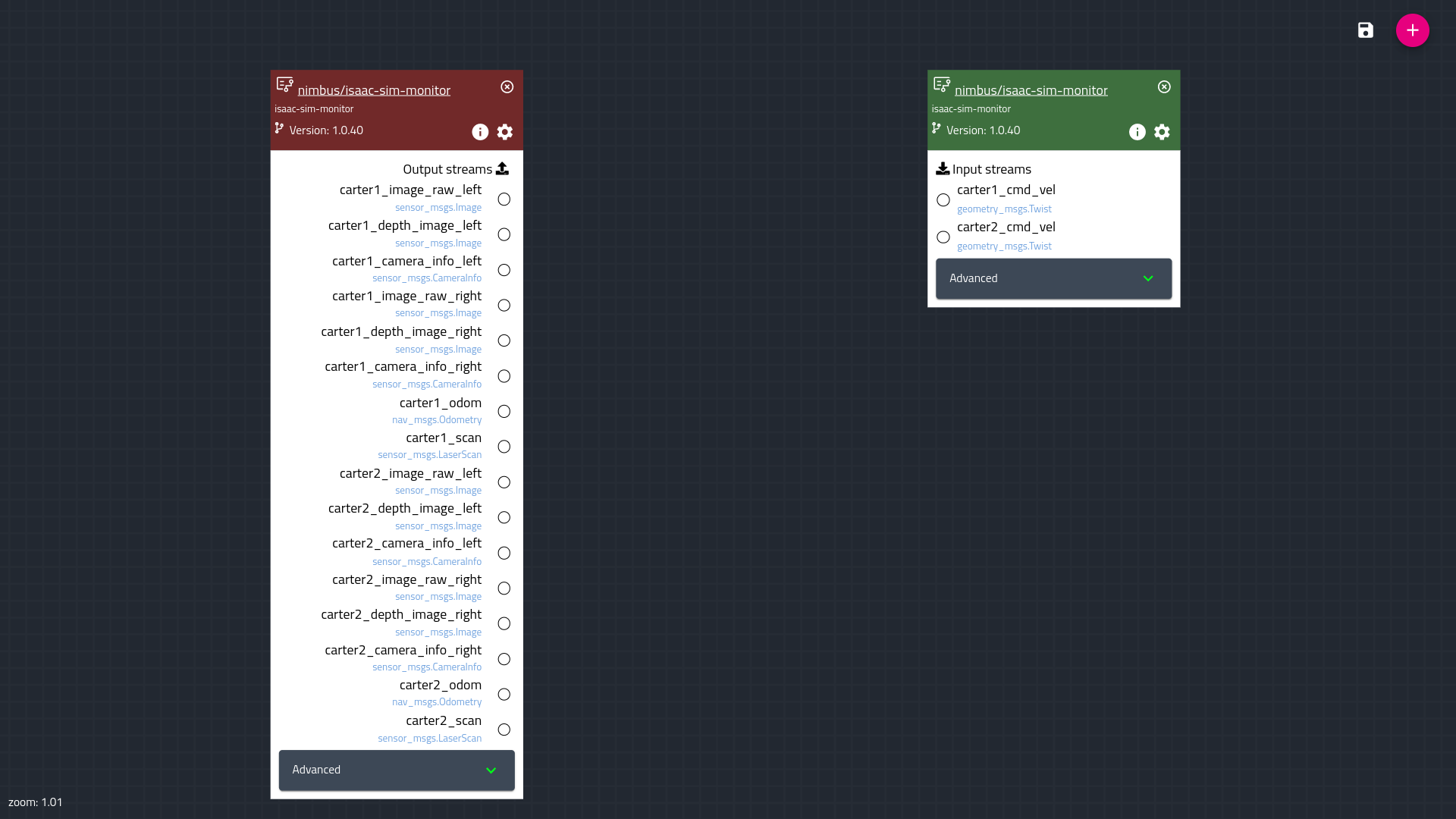

Figure 4. Nimbus robot editor (left) and Nimbus configuration editor (right)

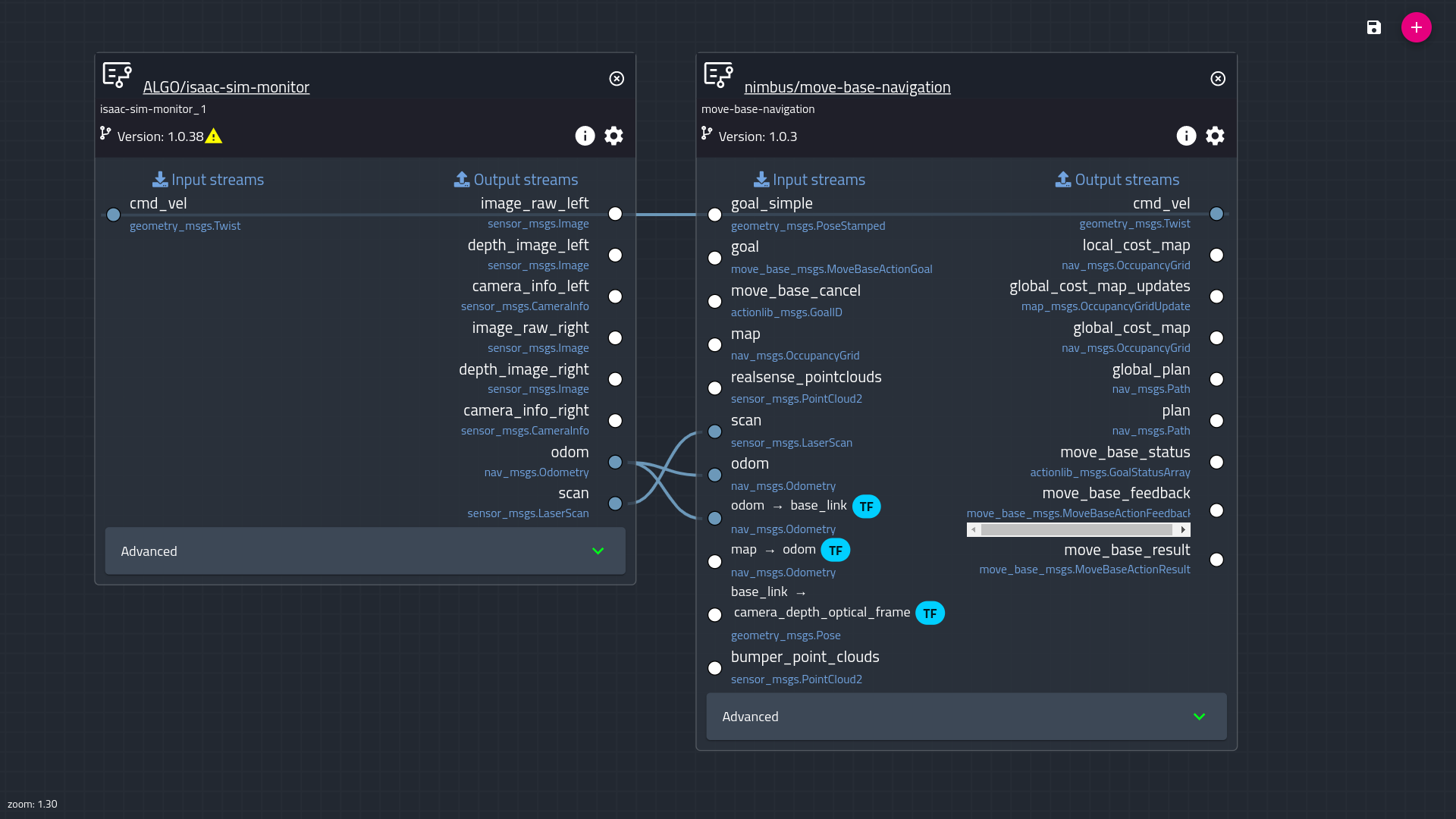

We then created the node configuration shown in Figure 5.

Figure 5. Nimbus simple mission configuration with move_base navigation

The two building blocks (already containerized) are a gateway node and a node for move_base navigation. This configuration was deployed to the agent running on the simulation desktop in the Cogniteam lab. Other more complex configurations are also available (with sources) in the Nimbus hub, including nodes for GMapping, path following, and more.

My team and I were stunned by the endless possibilities this approach enables. In the configuration described above, simulated sensory data arrives from Isaac Sim through the ROS gateway, which supports both ROS and ROS 2. View and control capabilities are enabled by Nimbus.

Out of the box, this setup enables our team to carry out basic simulation tasks and simulate the control of a robot fleet locally in our lab, along with many more capabilities. We can now record simulated runs and sensory data from robots, remotely SSH into a simulation machine, monitor simulation data globally, and even send email and SMS notifications about simulation progress to our validation team—all from a web browser.

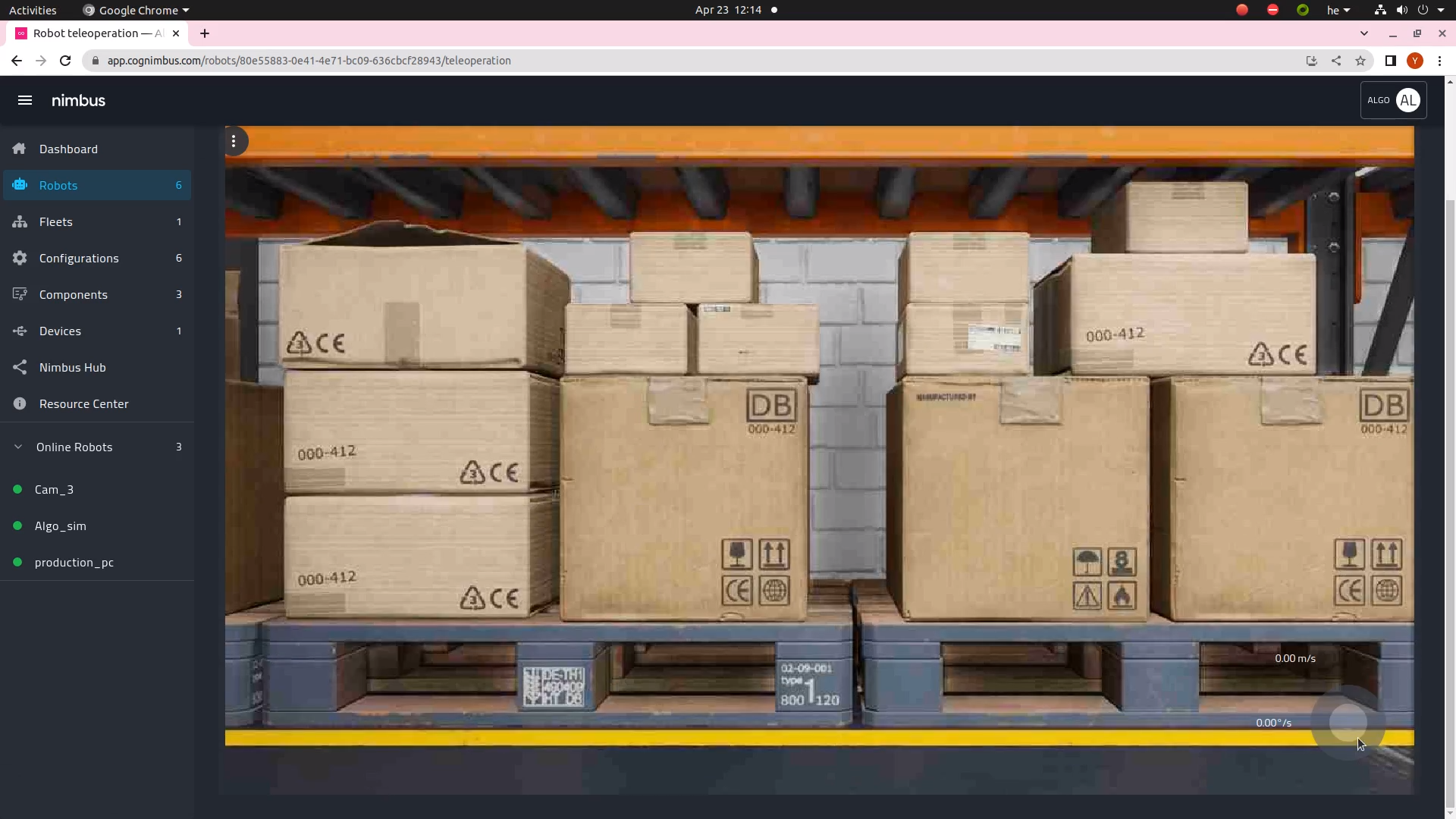

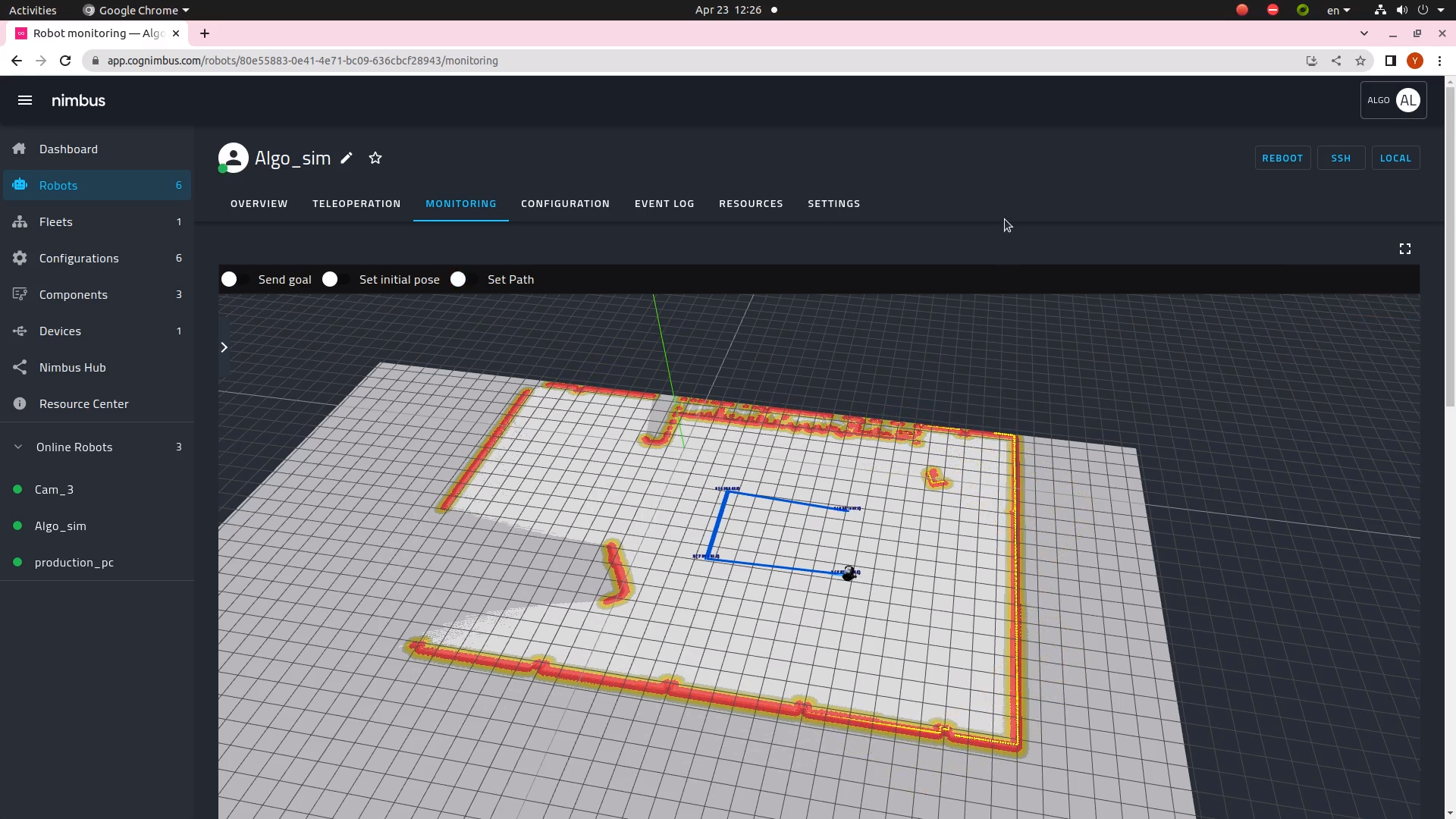

Combining Isaac Sim with Nimbus results in a unified system that is similar in features to available cloud simulation offerings, but runs on a local machine and does not involve additional cloud simulation compute costs. Additionally, it opens new cutting-edge simulation flows, such as simulation with hardware in the loop. This is not possible when the simulation runs in the cloud. Figure 6 shows how the control, navigation, and mapping look in Nimbus.

Figure 6. Nimbus robot WebRTC video monitoring (left) and Nimbus map view and autonomy control (right)

To replicate the setup described, refer to the Isaac Sim documentation. Then visit Nimbus to create a free account, log in, and follow the instructions to create a robot using a free license.

After the robot agent is installed on the same desktop where Isaac Sim is running headless, you will be able to provision the simulation through remote SSH and monitor the simulation machine from the Nimbus website.

Video 1. Nimbus and NVIDIA Isaac Sim demo video

Visit the Nimbus hub to deploy the Isaac Sim configuration. Since everything is already containerized (including Isaac Sim) and control is browser-based, you do not need to install any applications. The agent on the machine will set up everything needed to execute.

Then, on the monitor page of that agent, add monitoring for any data that is relevant to your setup. In the agent settings, you can define notifications by adding conditions on ROS streams such as:

“if GoalStatus == ABORTED”

send sms/mail to simulation@your-company.com

Cogniteam is happy to help you in the process. You can reach us at support@cogniteam.com.
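To make the idea of a condition on a ROS stream more concrete, here is a minimal Python sketch of how a rule such as “if GoalStatus == ABORTED” could be evaluated against a sequence of status values. This is not how Nimbus implements notifications. The `Rule` class and the `evaluate` function are hypothetical names invented for illustration, and the sketch only builds notification strings rather than sending SMS or email. The status codes mirror the constants defined in the ROS 1 `actionlib_msgs/GoalStatus` message.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable, Iterable, List


class GoalStatus(IntEnum):
    """Subset of the status codes defined in actionlib_msgs/GoalStatus (ROS 1)."""
    PENDING = 0
    ACTIVE = 1
    PREEMPTED = 2
    SUCCEEDED = 3
    ABORTED = 4
    REJECTED = 5


@dataclass
class Rule:
    """Hypothetical notification rule: a condition, a recipient, and a message."""
    condition: Callable[[int], bool]
    recipient: str
    message: str


def evaluate(rule: Rule, statuses: Iterable[int]) -> List[str]:
    """Return one notification each time the condition changes from false to true.

    Firing only on that transition avoids one message per repeated sample
    while the goal stays in the same state.
    """
    notifications = []
    was_true = False
    for status in statuses:
        is_true = rule.condition(status)
        if is_true and not was_true:
            notifications.append(f"to={rule.recipient}: {rule.message}")
        was_true = is_true
    return notifications


# Assumed example rule, using the placeholder address from the text above.
rule = Rule(
    condition=lambda s: s == GoalStatus.ABORTED,
    recipient="simulation@your-company.com",
    message="navigation goal aborted",
)

# A made-up status stream: the goal aborts, stays aborted, recovers, aborts again.
stream = [
    GoalStatus.ACTIVE,
    GoalStatus.ABORTED,
    GoalStatus.ABORTED,
    GoalStatus.ACTIVE,
    GoalStatus.ABORTED,
]
alerts = evaluate(rule, stream)
print(alerts)
```

In a live setup the status values would come from a subscriber on the navigation status topic instead of a hard-coded list, and the notification strings would be handed to whatever delivery channel is configured.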

Summary

For the successful deployment of autonomous robots, simulation is key. Running the same scenario multiple times is crucial for testing, but multi-robot simulations differ. Developing a high-fidelity multi-robot simulated environment is complex and takes time, but it can be simplified with NVIDIA Isaac Sim and Nimbus, as described in this post.

My team and I will be attending ICRA 2023 in London, May 29 to June 2 (Booth C22), showcasing our browser interface to robots and simulations running remotely in Israel.

To learn more about Isaac Sim, check out the NVIDIA Developer Isaac ROS Forum.

Source: NVIDIA